Welcome to the documentation for Apache Parquet.

The specification for the Apache Parquet file format is hosted in the parquet-format repository. The current implementation status of various features can be found in the implementation status page.


Apache Parquet is an open source, column-oriented data file format designed for efficient data storage and retrieval. It provides high performance compression and encoding schemes to handle complex data in bulk and is supported in many programming languages and analytics tools.

The parquet-format repository hosts the official specification of the Parquet file format, defining how data is structured and stored. This specification, along with the parquet.thrift Thrift metadata definitions, is necessary for developing software to effectively read and write Parquet files.

Note that the parquet-format repository does not contain source code for libraries to read or write Parquet files, but rather the formal definitions and documentation of the file format itself.

The parquet-java (formerly named parquet-mr) repository is part of the Apache Parquet project and contains:

Note that there are a number of other implementations of the Parquet format, some of which are listed below.

The Parquet ecosystem is rich and varied, encompassing a wide array of tools, libraries, and clients, each offering different levels of feature support. It’s important to note that not all implementations support the same features of the Parquet format. When integrating multiple Parquet implementations within your workflow, it is crucial to conduct thorough testing to ensure compatibility and performance across different platforms and tools.

You can find more information about the feature support of various Parquet implementations on the implementation status page.

Here is a non-exhaustive list of open source Parquet implementations:

We created Parquet to make the advantages of compressed, efficient columnar data representation available to any project in the Hadoop ecosystem.

Parquet is built from the ground up with complex nested data structures in mind, and uses the record shredding and assembly algorithm described in the Dremel paper. We believe this approach is superior to simple flattening of nested name spaces.

Parquet is built to support very efficient compression and encoding schemes. Multiple projects have demonstrated the performance impact of applying the right compression and encoding scheme to the data. Parquet allows compression schemes to be specified on a per-column level, and is future-proofed to allow adding more encodings as they are invented and implemented.

Parquet is built to be used by anyone. The Hadoop ecosystem is rich with data processing frameworks, and we are not interested in playing favorites. We believe that an efficient, well-implemented columnar storage substrate should be useful to all frameworks without the cost of extensive and difficult to set up dependencies.

Block (HDFS block): This means a block in HDFS and the meaning is unchanged for describing this file format. The file format is designed to work well on top of HDFS.

File: An HDFS file that must include the metadata for the file. It does not need to actually contain the data.

Row group: A logical horizontal partitioning of the data into rows. There is no physical structure that is guaranteed for a row group. A row group consists of a column chunk for each column in the dataset.

Column chunk: A chunk of the data for a particular column. Column chunks live in a particular row group and are guaranteed to be contiguous in the file.

Page: Column chunks are divided up into pages. A page is conceptually an indivisible unit (in terms of compression and encoding). There can be multiple page types which are interleaved in a column chunk.

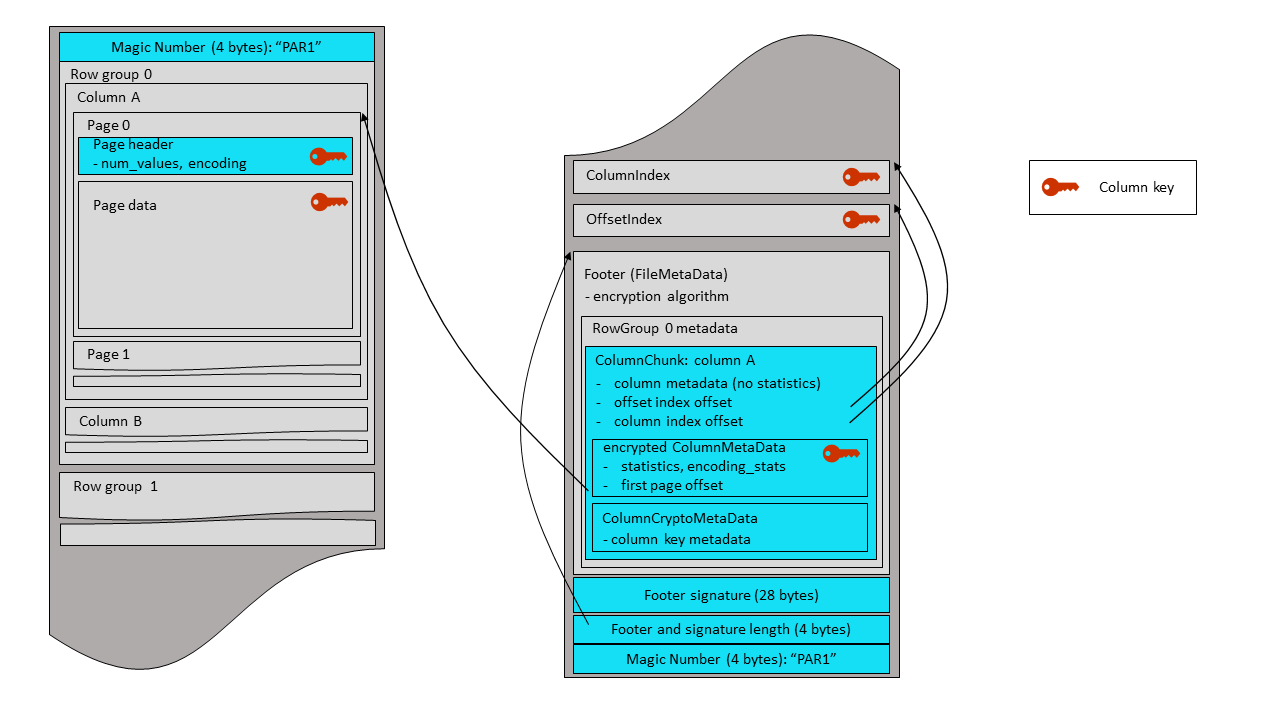

Hierarchically, a file consists of one or more row groups. A row group contains exactly one column chunk per column. Column chunks contain one or more pages.

This file and the thrift definition should be read together to understand the format.

    4-byte magic number "PAR1"
    <Column 1 Chunk 1>
    <Column 2 Chunk 1>
    ...
    <Column N Chunk 1>
    <Column 1 Chunk 2>
    <Column 2 Chunk 2>
    ...
    <Column N Chunk 2>
    ...
    <Column 1 Chunk M>
    <Column 2 Chunk M>
    ...
    <Column N Chunk M>
    File Metadata
    4-byte length in bytes of file metadata (little endian)
    4-byte magic number "PAR1"

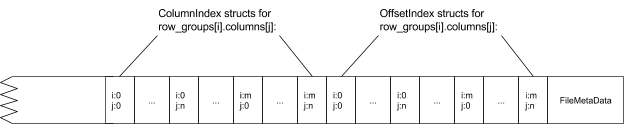

In the above example, there are N columns in this table, split into M row groups. The file metadata contains the start locations of all the column chunks. More details on what is contained in the metadata can be found in the Thrift definition.

File metadata is written after the data to allow for single pass writing.

Readers are expected to first read the file metadata to find all the column chunks they are interested in. The column chunks should then be read sequentially.
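As a rough illustration of the first step a reader takes, the following sketch locates the serialized file metadata from the last 8 bytes of a file that is already held in memory. The function name `LocateFileMetadata` and the in-memory `std::string` representation are assumptions made for this example; decoding the Thrift-encoded metadata itself is not shown.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>

// Finds the byte range of the serialized file metadata inside `file`,
// which is assumed to hold the complete contents of a Parquet file.
// Returns false if a magic number is missing or the length is implausible.
bool LocateFileMetadata(const std::string& file, size_t* offset, size_t* length) {
  const size_t kMagicSize = 4;
  const size_t kTrailerSize = 4 + kMagicSize;  // metadata length + "PAR1"
  // Smallest possible file: leading magic plus the trailer.
  if (file.size() < kMagicSize + kTrailerSize) return false;
  if (file.compare(0, kMagicSize, "PAR1") != 0) return false;
  if (file.compare(file.size() - kMagicSize, kMagicSize, "PAR1") != 0) return false;

  // The 4 bytes before the trailing magic hold the metadata length,
  // stored in little-endian byte order.
  const unsigned char* p =
      reinterpret_cast<const unsigned char*>(file.data()) + file.size() - kTrailerSize;
  const uint32_t len = static_cast<uint32_t>(p[0]) |
                       (static_cast<uint32_t>(p[1]) << 8) |
                       (static_cast<uint32_t>(p[2]) << 16) |
                       (static_cast<uint32_t>(p[3]) << 24);

  // The metadata must fit between the leading magic and the trailer.
  if (len > file.size() - kMagicSize - kTrailerSize) return false;
  *length = len;
  *offset = file.size() - kTrailerSize - len;
  return true;
}
```

A real reader would typically fetch only the tail of the file rather than load all of it; the whole-file buffer here just keeps the sketch short.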

The format is explicitly designed to separate the metadata from the data. This allows splitting columns into multiple files, as well as having a single metadata file reference multiple parquet files.

The extension mechanism of the binary Thrift field-id 32767 has some desirable properties:

Because only one field-id is reserved the extension bytes themselves require disambiguation; otherwise readers will not be able to decode extensions safely. This is left to implementers which MUST put enough unique state in their extension bytes for disambiguation. This can be relatively easily achieved by adding a UUID at the start or end of the extension bytes. The extension does not specify a disambiguation mechanism to allow more flexibility to implementers.

Putting everything together in an example, if we were to extend FileMetaData, it would look like this on the wire.

    N-1 bytes | Thrift compact protocol encoded FileMetadata (minus \0 thrift stop field)
    4 bytes   | 08 FF FF 01 (long form header for 32767: binary)
    1-5 bytes | ULEB128(M) encoded size of the extension
    M bytes   | extension bytes
    1 byte    | \0 (thrift stop field)

The choice to reserve only one field-id has an additional (and frankly unintended) property. It creates scarcity in the extension space and disincentivizes vendors from keeping their extensions private. As a vendor having an extension means one cannot use it in tandem with other extensions from other vendors even if such extensions are publicly known. The easiest path of interoperability and ability to further experiment is to push an extension through standardization and continue experimenting with other ideas internally on top of the (now) standardized version.

So far the above specification shows how different vendors can add extensions without stepping on each other’s toes. As long as extensions are private this works out ok.

Unavoidably (and desirably) some extensions will make it into the official specification. Depending on the nature of the extension, migration can take different paths. While it is out of the scope of this document to design all such migrations, we illustrate some of these paths in the examples.

To illustrate the applicability of the extension mechanism we provide examples of fictional extensions to Parquet and how migration can play out if/when the community decides to adopt them in the official specification.

A variant of FileMetaData encoded in Flatbuffers is introduced. This variant is more performant and can scale to very wide tables, something that current Thrift FileMetaData struggles with.

In its private form the footer of a Parquet file will look like so:

    N-1 bytes | Thrift compact protocol encoded FileMetadata (minus \0 thrift stop field)
    4 bytes   | 08 FF FF 01 (long form header for 32767: binary)
    1-5 bytes | ULEB128(K+28) encoded size of the extension
    K bytes   | Flatbuffers representation (v0) of FileMetaData
    4 bytes   | little-endian crc32(flatbuffer)
    4 bytes   | little-endian size(flatbuffer)
    4 bytes   | little-endian crc32(size(flatbuffer))
    16 bytes  | some-UUID
    1 byte    | \0 (thrift stop field)
    4 bytes   | PAR1

some-UUID is a UUID picked for this extension, and it is used throughout (possibly internal) experimentation. It is put at the end to allow detection of the extension when parsed in reverse. The little-endian sizes and crc32s are also placed at the end to facilitate efficient parsing of the footer in reverse, without requiring parsing of the Thrift compact protocol that precedes it.
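To make the reverse parsing concrete, here is a minimal sketch that walks backwards from the Thrift stop field to find the Flatbuffers payload. It assumes the caller has already isolated the Thrift-encoded footer bytes (ending with the stop field); `kExtensionUuid` and `FindFlatbufferFooter` are hypothetical names, and the two crc32 checks are deliberately left out to keep the example short.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <string>

// Hypothetical 16-byte identifier chosen for the experimental extension.
static const unsigned char kExtensionUuid[16] = {
    0x5a, 0x1d, 0x9c, 0x02, 0x7e, 0x44, 0x4b, 0x10,
    0x8f, 0x3a, 0x61, 0xd2, 0x0c, 0x95, 0xe7, 0x33};

// Decodes a 4-byte little-endian unsigned integer.
static uint32_t LoadLe32(const unsigned char* p) {
  return static_cast<uint32_t>(p[0]) | (static_cast<uint32_t>(p[1]) << 8) |
         (static_cast<uint32_t>(p[2]) << 16) | (static_cast<uint32_t>(p[3]) << 24);
}

// `footer` is assumed to hold the Thrift-encoded FileMetaData, including the
// trailing stop field. On success, reports where the Flatbuffers payload
// starts and how long it is. The crc32 fields are skipped, not verified.
bool FindFlatbufferFooter(const std::string& footer, size_t* offset, size_t* size) {
  const size_t kUuidSize = 16;
  const size_t kTrailerSize = 4 + 4 + 4 + kUuidSize;  // crc32, size, crc32(size), UUID
  if (footer.size() < kTrailerSize + 1) return false;
  if (footer.back() != '\0') return false;  // Thrift stop field

  // The UUID sits immediately before the stop field.
  const size_t uuid_pos = footer.size() - 1 - kUuidSize;
  if (std::memcmp(footer.data() + uuid_pos, kExtensionUuid, kUuidSize) != 0) return false;

  // Before the UUID: crc32(flatbuffer), size(flatbuffer), crc32(size).
  const unsigned char* bytes = reinterpret_cast<const unsigned char*>(footer.data());
  const size_t flatbuffer_end = uuid_pos - 12;
  const uint32_t fb_size = LoadLe32(bytes + flatbuffer_end + 4);
  if (fb_size > flatbuffer_end) return false;
  *offset = flatbuffer_end - fb_size;
  *size = fb_size;
  return true;
}
```

A production reader would also verify both checksums before trusting the size, since a matching UUID alone does not rule out corrupted bytes.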

At some point the experiments conclude and the extension is shared publicly with the community. The extension is proposed for inclusion in the standard with a migration plan to replace the existing FileMetaData.

The community reviews the proposal and (potentially) proposes changes to the Flatbuffers IDL representation. In addition, because this extension is a replacement of an existing struct, it must:

- FileMetaData and the FlatBuffers FileMetaData will be present.
- 32767: binary may not be present.

Once the design is ratified, the new FileMetaData encoding is made final with the following migration plan. For the next N years writers will write both the Thrift and the flatbuffer FileMetaData. It will look much like its private form except the flatbuffer IDL may be different:

    N-1 bytes | Thrift compact protocol encoded FileMetadata (minus \0 thrift stop field)
    4 bytes   | 08 FF FF 01 (long form header for 32767: binary)
    1-5 bytes | ULEB128(K+28) encoded size of the extension
    K bytes   | Flatbuffers representation (v1) of FileMetaData
    4 bytes   | little-endian crc32(flatbuffer)
    4 bytes   | little-endian size(flatbuffer)
    4 bytes   | little-endian crc32(size(flatbuffer))
    16 bytes  | some-other-UUID
    1 byte    | \0 (thrift stop field)
    4 bytes   | PAR1

After the migration period, the end of the Parquet file may look like this:

    K bytes | Flatbuffers representation (v1) of FileMetaData
    4 bytes | little-endian crc32(flatbuffer)
    4 bytes | little-endian size(flatbuffer)
    4 bytes | little-endian crc32(size(flatbuffer))
    4 bytes | PAR3

In this example, we see several design decisions for the extension at play:

- FileMetaData cannot be extended itself.

The community experiments with a new encoding extension. At the same time they want to keep the newly encoded Parquet files open for everyone to read. So they add a new encoding via an extension to the ColumnMetaData struct. The extension stores offsets in the Parquet file where the new and duplicate encoded data for this column lives. The new writer carefully places all the new encodings at the start of the row group and all the old encodings at the end of the row group. This layout minimizes disruption for readers unaware of the new encodings.

In its private form Parquet files look like so:

    4 bytes |           | PAR1
            |           | Column b (new encoding)
            |           | Column c (new encoding)
    R bytes | Row Group | Column a
            | 0         | Column d
            |           | Column b (old encoding)
            |           | Column c (old encoding)
            |           | FileMetaData
            |           |   ColumnMetaData: a
            |           |   ColumnMetaData: b
    F bytes |           |     <extension-blob with offsets to new encoding>
            |           |   ColumnMetaData: c
            |           |     <extension-blob with offsets to new encoding>
            |           |   ColumnMetaData: d
    4 bytes |           | PAR1

The custom reader is compiled against a Thrift IDL that declares a binary field with id 32767. This makes the reader extension-aware, so that it can inspect the extension bytes looking for the UUID disambiguator. If the UUID is found, the reader decodes the offsets from the rest of the bytes and reads the region of the file containing the new encoding.

If/when the encoding is ratified, it is added to the official specification as an additional type in Encodings at which point the extension is no longer necessary, nor the duplicated data in the row group.

    #include <cassert>
    #include <cstdint>
    #include <string>

    // Appends x to out as an unsigned LEB128 (ULEB128) varint.
    void AppendUleb(uint32_t x, std::string* out) {
      while (true) {
        uint8_t c = x & 0x7F;
        if (x < 0x80) return out->push_back(c);
        out->push_back(c + 0x80);  // more bytes follow: set the continuation bit
        x >>= 7;
      }
    }

    // Appends ext to a Thrift compact protocol encoded struct as field 32767.
    std::string AppendExtension(std::string thrift, const std::string& ext) {
      assert(thrift.back() == '\x00');  // there was a stop field in the first place
      thrift.back() = '\x08';           // replace stop field with binary type
      AppendUleb(32767, &thrift);       // field-id
      AppendUleb(static_cast<uint32_t>(ext.size()), &thrift);
      thrift += ext;
      thrift += '\x00';  // add the stop field
      return thrift;
    }

Larger row groups allow for larger column chunks which makes it possible to do larger sequential IO. Larger groups also require more buffering in the write path (or a two pass write). We recommend large row groups (512MB - 1GB). Since an entire row group might need to be read, we want it to completely fit on one HDFS block. Therefore, HDFS block sizes should also be set to be larger. An optimized read setup would be: 1GB row groups, 1GB HDFS block size, 1 HDFS block per HDFS file.

Data pages should be considered indivisible, so smaller data pages allow for more fine-grained reading (e.g. single row lookup). Larger page sizes incur less space overhead (fewer page headers) and potentially less parsing overhead (processing headers). Note: for sequential scans, it is not expected to read a page at a time; this is not the IO chunk. We recommend 8KB for page sizes.

There are many places in the format for compatible extensions:

There are two types of metadata: file metadata, and page header metadata.

All thrift structures are serialized using the TCompactProtocol. The full definition of these structures is given in the Parquet Thrift definition.

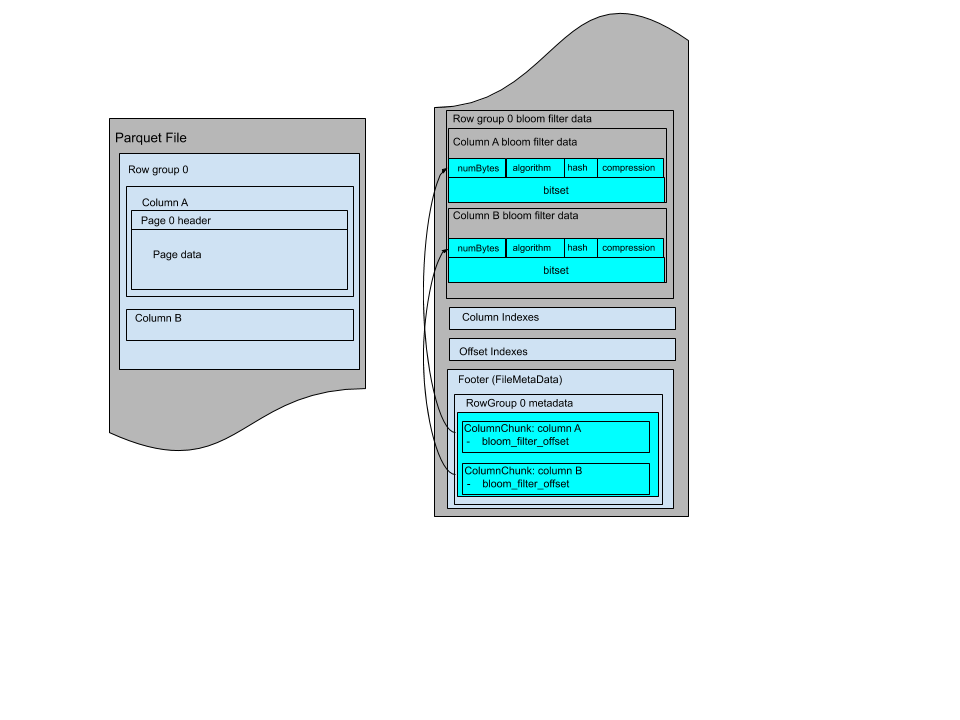

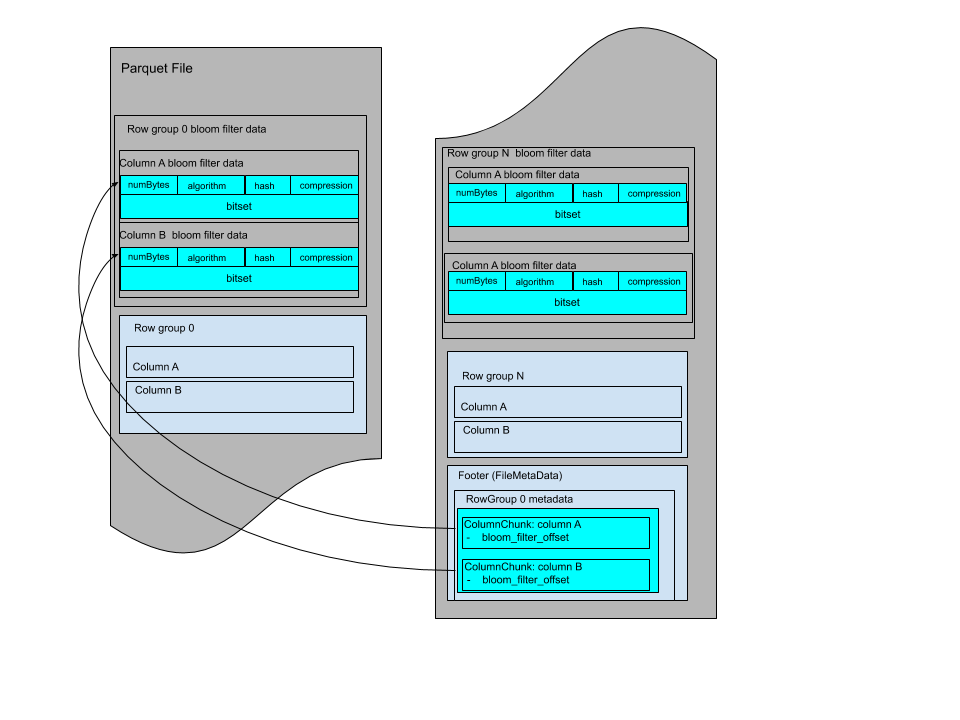

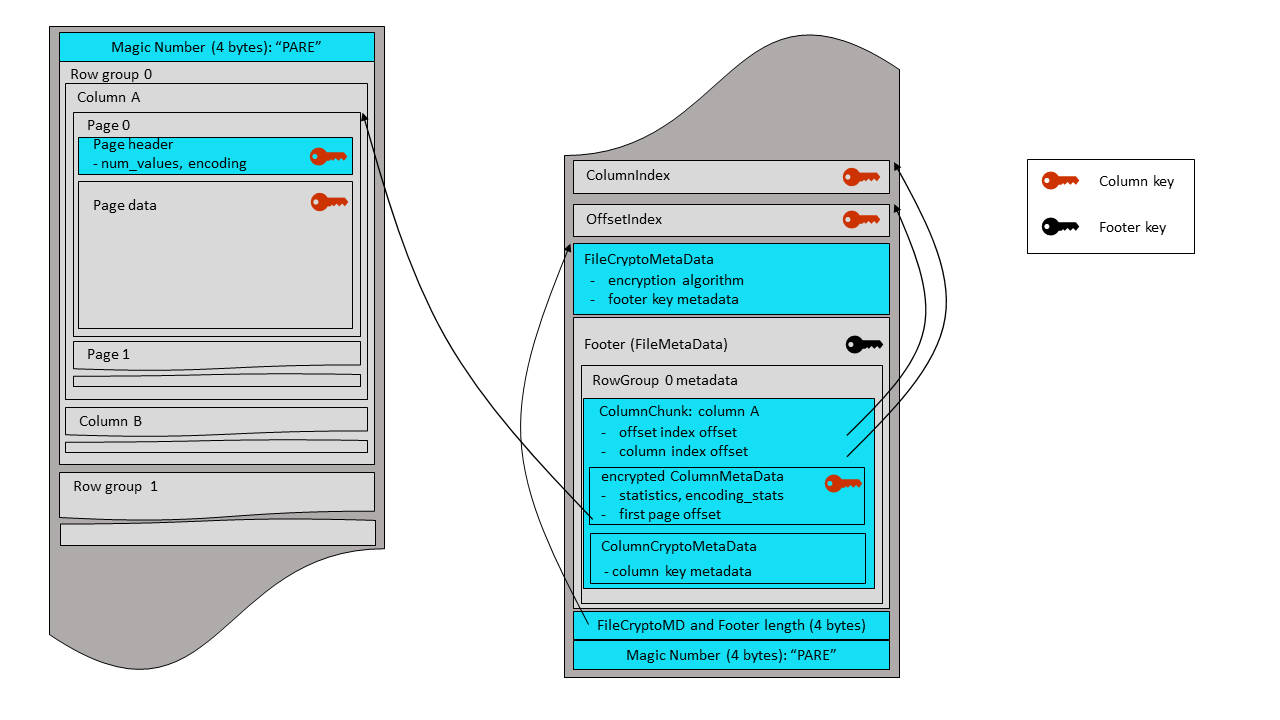

In the diagram below, file metadata is described by the FileMetaData structure. This file metadata provides offset and size information useful when navigating the Parquet file.

Page header metadata (PageHeader and children in the diagram) is stored in-line with the page data, and is used in the reading and decoding of data.

The types supported by the file format are intended to be as minimal as possible, with a focus on how the types affect on-disk storage. For example, 16-bit ints are not explicitly supported in the storage format since they are covered by 32-bit ints with an efficient encoding. This reduces the complexity of implementing readers and writers for the format. The types are:

- BOOLEAN: 1 bit boolean

- INT32: 32 bit signed ints

- INT64: 64 bit signed ints

- INT96: 96 bit signed ints (deprecated; only used by legacy implementations)

- FLOAT: IEEE 32-bit floating point values

- DOUBLE: IEEE 64-bit floating point values

- BYTE_ARRAY: arbitrarily long byte arrays

- FIXED_LEN_BYTE_ARRAY: fixed length byte arrays

This document contains the specification of geospatial types and statistics.

The Geometry and Geography class hierarchy and its Well-Known Text (WKT) and Well-Known Binary (WKB) serializations (ISO variant supporting XY, XYZ, XYM, XYZM) are defined by the OpenGIS Implementation Specification for Geographic information - Simple feature access - Part 1: Common architecture, from the OGC (Open Geospatial Consortium).

The version of the OGC standard first used here is 1.2.1, but future versions may also be used if the WKB representation remains wire-compatible.

A Coordinate Reference System (CRS) is a mapping of how coordinates refer to locations on Earth.

The default CRS OGC:CRS84 means that the geospatial features must be stored in the order of longitude/latitude based on the WGS84 datum.

Custom CRS can be specified by a string value. It is recommended to use an identifier-based approach like Spatial reference identifier.

For geographic CRS, longitudes are bound by [-180, 180] and latitudes are bound by [-90, 90].

The edge interpolation algorithm specifies how edges are interpolated, and is one of the following values:

- spherical: edges are interpolated as geodesics on a sphere.
- vincenty: https://en.wikipedia.org/wiki/Vincenty%27s_formulae
- thomas: Thomas, Paul D. Spheroidal geodesics, reference systems, & local geometry. US Naval Oceanographic Office, 1970.
- andoyer: Thomas, Paul D. Mathematical models for navigation systems. US Naval Oceanographic Office, 1965.
- karney: Karney, Charles FF. “Algorithms for geodesics.” Journal of Geodesy 87 (2013): 43-55, and GeographicLib

Two geospatial logical type annotations are supported:

- GEOMETRY: geospatial features in the WKB format with linear/planar edges interpolation. See Geometry
- GEOGRAPHY: geospatial features in the WKB format with an explicit (non-linear/non-planar) edges interpolation algorithm. See Geography

GeospatialStatistics is a struct specific to the GEOMETRY and GEOGRAPHY logical types that stores statistics of a column chunk. It is an optional field in the ColumnMetaData and contains Bounding Box and Geospatial Types, which are described below in detail.

A geospatial instance has at least two coordinate dimensions: X and Y for 2D coordinates of each point. Please note that X is longitude/easting and Y is latitude/northing. A geospatial instance can optionally have Z and/or M values associated with each point.

The Z values introduce the third dimension coordinate. Usually they are used to indicate the height, or elevation.

M values are an opportunity for a geospatial instance to track a value in a fourth dimension. These values can be used as a linear reference value (e.g., highway milepost value), a timestamp, or some other value as defined by the CRS.

A bounding box is defined by the Thrift struct below as a pair of min/max values for the coordinates along each axis. Note that X and Y values are always present. Z and M are omitted for 2D geospatial instances.

When calculating a bounding box, null or NaN values in a coordinate dimension are skipped. For example, POINT (1 NaN) contributes a value to X but no values to Y, Z, or M dimension of the bounding box. If a dimension has only null or NaN values, that dimension is omitted from the bounding box. If either the X or Y dimension is missing, then the bounding box itself is not produced.
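The NaN-skipping rule can be sketched as follows for the two required dimensions. The `Point`, `AxisRange`, and `Box2D` types and the `ComputeBox` function are hypothetical names for this example only; Z and M handling and antimeridian wraparound are intentionally left out.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Point {
  double x;
  double y;
};

// Tracks the min/max of one coordinate dimension, ignoring NaN values.
struct AxisRange {
  double min = 0.0;
  double max = 0.0;
  bool has_value = false;  // stays false if only NaN values were seen

  void Update(double v) {
    if (std::isnan(v)) return;  // NaN contributes nothing to this dimension
    if (!has_value) {
      min = max = v;
      has_value = true;
      return;
    }
    if (v < min) min = v;
    if (v > max) max = v;
  }
};

struct Box2D {
  AxisRange x;
  AxisRange y;
};

// Returns false when no bounding box is produced, i.e. when either the
// X or the Y dimension received no usable values.
bool ComputeBox(const std::vector<Point>& points, Box2D* box) {
  Box2D result;
  for (const Point& p : points) {
    result.x.Update(p.x);
    result.y.Update(p.y);
  }
  if (!result.x.has_value || !result.y.has_value) return false;
  *box = result;
  return true;
}
```

Each dimension is tracked independently, so a point such as POINT (1 NaN) still widens the X range while leaving Y untouched.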

For the X values only, xmin may be greater than xmax. In this case, an object in this bounding box may match if it contains an X such that x >= xmin OR x <= xmax. This wraparound occurs only when the corresponding bounding box crosses the antimeridian line. In geographic terminology, the concepts of xmin, xmax, ymin, and ymax are also known as westernmost, easternmost, southernmost and northernmost, respectively.

For GEOGRAPHY types, X and Y values are restricted to the canonical ranges of [-180, 180] for X and [-90, 90] for Y.

    struct BoundingBox {
      1: required double xmin;
      2: required double xmax;
      3: required double ymin;
      4: required double ymax;
      5: optional double zmin;
      6: optional double zmax;
      7: optional double mmin;
      8: optional double mmax;
    }
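The X wraparound rule can be expressed as a small predicate. This is only a sketch; `XRangeContains` is a hypothetical helper name, and it looks at a single X value rather than a whole geometry.

```cpp
#include <cassert>

// Returns true if the longitude/easting `x` falls inside the X range of a
// bounding box described by xmin and xmax.
bool XRangeContains(double xmin, double xmax, double x) {
  if (xmin <= xmax) {
    // Ordinary case: a single contiguous interval.
    return x >= xmin && x <= xmax;
  }
  // xmin > xmax: the box crosses the antimeridian, so the range wraps
  // around and covers [xmin, 180] together with [-180, xmax].
  return x >= xmin || x <= xmax;
}
```

For example, a box with xmin = 170 and xmax = -170 contains x = 175 and x = -179, but not x = 0.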

A list of geospatial types from all instances in the GEOMETRY or GEOGRAPHY column, or an empty list if they are not known.

This is borrowed from geometry_types of GeoParquet except that values in the list are WKB (ISO-variant) integer codes. The table below shows the most common geospatial types and their codes:

| Type | XY | XYZ | XYM | XYZM |
|---|---|---|---|---|
| Point | 0001 | 1001 | 2001 | 3001 |
| LineString | 0002 | 1002 | 2002 | 3002 |
| Polygon | 0003 | 1003 | 2003 | 3003 |
| MultiPoint | 0004 | 1004 | 2004 | 3004 |
| MultiLineString | 0005 | 1005 | 2005 | 3005 |
| MultiPolygon | 0006 | 1006 | 2006 | 3006 |
| GeometryCollection | 0007 | 1007 | 2007 | 3007 |
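The codes in the table follow a regular pattern: the thousands digit selects the dimension and the remainder selects the geometry type. The sketch below decodes a code on that basis. `DescribeWkbCode` is a hypothetical helper, and it only recognizes the seven types listed in the table.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Splits an ISO WKB integer code (e.g. 1003) into a geometry type name and
// a dimension label. Returns false for codes not covered by the table.
bool DescribeWkbCode(int32_t code, std::string* type, std::string* dims) {
  static const char* const kTypes[] = {
      "Point", "LineString", "Polygon", "MultiPoint",
      "MultiLineString", "MultiPolygon", "GeometryCollection"};
  static const char* const kDims[] = {"XY", "XYZ", "XYM", "XYZM"};
  if (code < 0) return false;
  const int32_t base = code % 1000;  // 1..7 selects the geometry type
  const int32_t dim = code / 1000;   // 0..3 selects XY, XYZ, XYM, XYZM
  if (base < 1 || base > 7 || dim > 3) return false;
  *type = kTypes[base - 1];
  *dims = kDims[dim];
  return true;
}
```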

In addition, the following rules are applied:

- [0003, 0006]).
- [0001, 0001] is not valid).

CRS is represented as a string value. Writer and reader implementations are responsible for serializing and deserializing the CRS, respectively.

As a convention to maximize interoperability, custom CRS values can be specified by a string of the format type:identifier, where type is one of the following values:

- srid: Spatial reference identifier, identifier is the SRID itself.
- projjson: PROJJSON, identifier is the name of a table property or a file property where the projjson string is stored.

The axis order of the coordinates in WKB and bounding box stored in Parquet follows the de facto standard for axis order in WKB and is therefore always (x, y) where x is easting or longitude and y is northing or latitude. This ordering explicitly overrides the axis order as specified in the CRS.
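A reader that follows the type:identifier convention might split the CRS string as in the sketch below. `ParseCrs` is a hypothetical helper; strings that do not use one of the two listed prefixes (including the default OGC:CRS84) are reported as not matching and left for the caller to interpret.

```cpp
#include <cassert>
#include <string>

// Splits a CRS string of the form "type:identifier" at the first colon.
// Only the "srid" and "projjson" types from the convention are accepted.
bool ParseCrs(const std::string& crs, std::string* type, std::string* identifier) {
  const std::string::size_type colon = crs.find(':');
  if (colon == std::string::npos) return false;
  const std::string prefix = crs.substr(0, colon);
  if (prefix != "srid" && prefix != "projjson") return false;
  *type = prefix;
  *identifier = crs.substr(colon + 1);  // everything after the first colon
  return true;
}
```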

Logical types are used to extend the types that parquet can be used to store, by specifying how the primitive types should be interpreted. This keeps the set of primitive types to a minimum and reuses parquet’s efficient encodings. For example, strings are stored with the primitive type BYTE_ARRAY with a STRING annotation.

This file contains the specification for all logical types.

The parquet format’s LogicalType stores the type annotation. The annotation may require additional metadata fields, as well as rules for those fields.

There is an older representation of the logical type annotations called ConvertedType. To support backward compatibility with old files, readers should interpret LogicalTypes in the same way as ConvertedType, and writers should populate ConvertedType in the metadata according to well defined conversion rules.

The Thrift definition of the metadata has two fields for logical types: ConvertedType and LogicalType. ConvertedType is an enum of all available annotations. Since Thrift enums can’t have additional type parameters, it is cumbersome to define additional type parameters, like decimal scale and precision (which are additional 32 bit integer fields on SchemaElement, and are relevant only for decimals) or time unit and UTC adjustment flag for Timestamp types. To overcome this problem, a new logical type representation was introduced into the metadata to replace ConvertedType: LogicalType. The new representation is a union of structs of logical types, which allows a more flexible API in which logical types can have type parameters.

ConvertedType is deprecated. However, to maintain compatibility with old writers, Parquet readers should be able to read and interpret ConvertedType annotations in case LogicalType annotations are not present. Parquet writers must always write LogicalType annotations where applicable, but must also write the corresponding ConvertedType annotations (if any) to maintain compatibility with old readers.

Compatibility considerations are mentioned for each annotation in the corresponding section.

STRING may only be used to annotate the BYTE_ARRAY primitive type and indicates that the byte array should be interpreted as a UTF-8 encoded character string.

The sort order used for STRING values is unsigned byte-wise comparison.

Compatibility

STRING corresponds to UTF8 ConvertedType.

ENUM annotates the BYTE_ARRAY primitive type and indicates that the value was converted from an enumerated type in another data model (e.g. Thrift, Avro, Protobuf). Applications using a data model lacking a native enum type should interpret an ENUM-annotated field as a UTF-8 encoded string.

The sort order used for ENUM values is unsigned byte-wise comparison.

UUID annotates a 16-byte FIXED_LEN_BYTE_ARRAY primitive type. The value is encoded using big-endian, so that 00112233-4455-6677-8899-aabbccddeeff is encoded as the bytes 00 11 22 33 44 55 66 77 88 99 aa bb cc dd ee ff (this example is from Wikipedia’s UUID page).

The sort order used for UUID values is unsigned byte-wise comparison.
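The big-endian encoding means the 16 bytes appear in the same order as the hex digits in the textual form. A minimal sketch of that conversion follows; `EncodeUuid` is a hypothetical helper, and it skips hyphens wherever they occur instead of validating their positions.

```cpp
#include <cassert>
#include <cstdint>
#include <string>
#include <vector>

// Converts a textual UUID into the 16 bytes stored in the
// FIXED_LEN_BYTE_ARRAY, in the order the hex digits are written.
bool EncodeUuid(const std::string& text, std::vector<uint8_t>* out) {
  std::vector<uint8_t> bytes;
  int high = -1;  // pending high nibble, or -1 if none
  for (char c : text) {
    if (c == '-') continue;
    int digit;
    if (c >= '0' && c <= '9') {
      digit = c - '0';
    } else if (c >= 'a' && c <= 'f') {
      digit = c - 'a' + 10;
    } else if (c >= 'A' && c <= 'F') {
      digit = c - 'A' + 10;
    } else {
      return false;  // not a hex digit
    }
    if (high < 0) {
      high = digit;
    } else {
      bytes.push_back(static_cast<uint8_t>(high * 16 + digit));
      high = -1;
    }
  }
  if (high >= 0 || bytes.size() != 16) return false;
  *out = bytes;
  return true;
}
```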

INT annotation can be used to specify the maximum number of bits in the stored value. The annotation has two parameters: bit width and sign. Allowed bit width values are 8, 16, 32, 64, and sign can be true or false. For signed integers, the second parameter should be true, for example, a signed integer with bit width of 8 is defined as INT(8, true). Implementations may use these annotations to produce smaller in-memory representations when reading data.

If a stored value is larger than the maximum allowed by the annotation, the behavior is not defined and can be determined by the implementation. Implementations must not write values that are larger than the annotation allows.

INT(8, true), INT(16, true), and INT(32, true) must annotate an int32 primitive type and INT(64, true) must annotate an int64 primitive type. INT(32, true) and INT(64, true) are implied by the int32 and int64 primitive types if no other annotation is present and should be considered optional.

The sort order used for signed integer types is signed.

INT annotation can be used to specify unsigned integer types, along with a maximum number of bits in the stored value. The annotation has two parameters: bit width and sign. Allowed bit width values are 8, 16, 32, 64, and sign can be true or false. In case of unsigned integers, the second parameter should be false, for example, an unsigned integer with bit width of 8 is defined as INT(8, false). Implementations may use these annotations to produce smaller in-memory representations when reading data.

If a stored value is larger than the maximum allowed by the annotation, the behavior is not defined and can be determined by the implementation. Implementations must not write values that are larger than the annotation allows.

INT(8, false), INT(16, false), and INT(32, false) must annotate an int32 primitive type and INT(64, false) must annotate an int64 primitive type.

The sort order used for unsigned integer types is unsigned.
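Because unsigned values are stored in the signed int32 and int64 primitive types, a comparison has to reinterpret the stored bits as unsigned first. The following is a small sketch of that idea; `LessUint32` and `LessUint64` are hypothetical helper names.

```cpp
#include <cassert>
#include <cstdint>

// Orders two INT(32, false) values that are stored as int32.
// Converting to uint32_t reinterprets the stored bit pattern as unsigned.
bool LessUint32(int32_t a, int32_t b) {
  return static_cast<uint32_t>(a) < static_cast<uint32_t>(b);
}

// Orders two INT(64, false) values that are stored as int64.
bool LessUint64(int64_t a, int64_t b) {
  return static_cast<uint64_t>(a) < static_cast<uint64_t>(b);
}
```

For instance, a stored int32 of -1 carries the bit pattern of 4294967295, so it sorts after 1 under the unsigned order even though it sorts before 1 under the signed order.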

INT_8, INT_16, INT_32, and INT_64 annotations can be also used to specify signed integers with 8, 16, 32, or 64 bit width.

INT_8, INT_16, and INT_32 must annotate an int32 primitive type and INT_64 must annotate an int64 primitive type. INT_32 and INT_64 are implied by the int32 and int64 primitive types if no other annotation is present and should be considered optional.

UINT_8, UINT_16, UINT_32, and UINT_64 annotations can be also used to specify unsigned integers with 8, 16, 32, or 64 bit width.

UINT_8, UINT_16, and UINT_32 must annotate an int32 primitive type and UINT_64 must annotate an int64 primitive type.

Backward compatibility:

| ConvertedType | LogicalType |
|---|---|
| INT_8 | IntType (bitWidth = 8, isSigned = true) |
| INT_16 | IntType (bitWidth = 16, isSigned = true) |
| INT_32 | IntType (bitWidth = 32, isSigned = true) |
| INT_64 | IntType (bitWidth = 64, isSigned = true) |
| UINT_8 | IntType (bitWidth = 8, isSigned = false) |
| UINT_16 | IntType (bitWidth = 16, isSigned = false) |
| UINT_32 | IntType (bitWidth = 32, isSigned = false) |
| UINT_64 | IntType (bitWidth = 64, isSigned = false) |

Forward compatibility:

| LogicalType | isSigned | bitWidth | ConvertedType |
|---|---|---|---|
| IntType | true | 8 | INT_8 |
| IntType | true | 16 | INT_16 |
| IntType | true | 32 | INT_32 |
| IntType | true | 64 | INT_64 |
| IntType | false | 8 | UINT_8 |
| IntType | false | 16 | UINT_16 |
| IntType | false | 32 | UINT_32 |
| IntType | false | 64 | UINT_64 |

DECIMAL annotation represents arbitrary-precision signed decimal numbers of the form unscaledValue * 10^(-scale).

The primitive type stores an unscaled integer value. For BYTE_ARRAY and FIXED_LEN_BYTE_ARRAY, the unscaled number must be encoded as two’s complement using big-endian byte order (the most significant byte is the zeroth element). The scale stores the number of digits of that value that are to the right of the decimal point, and the precision stores the maximum number of digits supported in the unscaled value.

If not specified, the scale is 0. Scale must be zero or a positive integer less than or equal to the precision. Precision is required and must be a non-zero positive integer. A precision too large for the underlying type (see below) is an error.
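As an illustration of the byte encoding, the sketch below decodes a big-endian two's complement unscaled value and applies the scale. It is limited to values of at most 8 bytes so that the result fits in an int64; `DecodeUnscaled` and `ToDouble` are hypothetical helpers, and the conversion to double is lossy and meant for display only.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <cstring>
#include <vector>

// Decodes a big-endian two's complement unscaled value of 1 to 8 bytes.
bool DecodeUnscaled(const std::vector<uint8_t>& bytes, int64_t* out) {
  if (bytes.empty() || bytes.size() > 8) return false;
  // Start from all ones for negative values so that the sign is extended
  // into the bytes that the input does not cover.
  uint64_t acc = (bytes[0] & 0x80) ? ~uint64_t{0} : 0;
  for (uint8_t b : bytes) acc = (acc << 8) | b;
  std::memcpy(out, &acc, sizeof(acc));  // reinterpret the bits as signed
  return true;
}

// Approximates unscaledValue * 10^(-scale) as a double.
double ToDouble(int64_t unscaled, int32_t scale) {
  return static_cast<double>(unscaled) * std::pow(10.0, -scale);
}
```

For example, the bytes 30 39 decode to the unscaled value 12345, which with scale 2 represents 123.45.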

DECIMAL can be used to annotate the following types:

- int32: for 1 <= precision <= 9
- int64: for 1 <= precision <= 18; precision < 10 will produce a warning
- fixed_len_byte_array: precision is limited by the array size. Length n can store <= floor(log_10(2^(8*n - 1) - 1)) base-10 digits
- byte_array: precision is not limited, but is required. The minimum number of bytes to store the unscaled value should be used.

The sort order used for DECIMAL values is signed comparison of the represented value.

If the column uses int32 or int64 physical types, then signed comparison of the integer values produces the correct ordering. If the physical type is fixed_len_byte_array, then the correct ordering can be produced by flipping the most-significant bit in the first byte and then using unsigned byte-wise comparison.
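The bit-flipping trick for fixed-length values can be sketched as follows. `DecimalLess` is a hypothetical helper; it assumes both inputs have the same non-zero length, as they would within one fixed-length column.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <vector>

// Orders two equal-length big-endian two's complement values.
// The arguments are taken by value so the inputs are not modified.
bool DecimalLess(std::vector<uint8_t> a, std::vector<uint8_t> b) {
  assert(!a.empty() && a.size() == b.size());
  // Flipping the sign bit maps negative values below non-negative ones
  // under an unsigned comparison.
  a[0] ^= 0x80;
  b[0] ^= 0x80;
  // memcmp compares bytes as unsigned char, i.e. unsigned byte-wise.
  return std::memcmp(a.data(), b.data(), a.size()) < 0;
}
```

Without the flip, a negative value such as FF FF (-1) would sort after 00 01 (1), because its first byte is larger when read as unsigned.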

Compatibility

To support compatibility with older readers, implementations of parquet-format should write DecimalType precision and scale into the corresponding SchemaElement field in metadata.

The FLOAT16 annotation represents half-precision floating-point numbers in the 2-byte IEEE little-endian format.

Used in contexts where precision is traded off for smaller footprint and potentially better performance.

The primitive type is a 2-byte FIXED_LEN_BYTE_ARRAY.

The sort order for FLOAT16 is signed (with special handling of NaNs and signed zeros); it uses the same logic as FLOAT and DOUBLE.
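For readers without a native half-precision type, the 2-byte value can be widened to a 32-bit float. The sketch below follows the IEEE binary16 layout (1 sign bit, 5 exponent bits, 10 mantissa bits); `DecodeFloat16` is a hypothetical helper name.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <limits>

// Decodes a little-endian IEEE half-precision value into a float.
float DecodeFloat16(const uint8_t bytes[2]) {
  const uint16_t bits = static_cast<uint16_t>(bytes[0] | (bytes[1] << 8));
  const int sign = (bits >> 15) & 1;
  const int exponent = (bits >> 10) & 0x1F;
  const int mantissa = bits & 0x3FF;
  float magnitude;
  if (exponent == 0) {
    // Zero or subnormal: mantissa * 2^-24.
    magnitude = std::ldexp(static_cast<float>(mantissa), -24);
  } else if (exponent == 31) {
    // All exponent bits set: infinity or NaN.
    magnitude = mantissa == 0 ? std::numeric_limits<float>::infinity()
                              : std::numeric_limits<float>::quiet_NaN();
  } else {
    // Normal: (1024 + mantissa) * 2^(exponent - 25).
    magnitude = std::ldexp(static_cast<float>(mantissa + 1024), exponent - 25);
  }
  return sign ? -magnitude : magnitude;
}
```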

DATE is used for a logical date type, without a time of day. It must annotate an int32 that stores the number of days from the Unix epoch, 1 January 1970.

The sort order used for DATE is signed.
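One way to compute the stored day count from a calendar date is shown below. The sketch uses a widely known civil-calendar conversion and assumes the proleptic Gregorian calendar, which is an assumption of this example; `DaysFromCivil` is a hypothetical helper name and does not validate its inputs.

```cpp
#include <cassert>
#include <cstdint>

// Returns the number of days between 1970-01-01 and the given date
// (negative for earlier dates). month is 1..12 and day is 1..31.
int32_t DaysFromCivil(int64_t year, unsigned month, unsigned day) {
  // Treat January and February as the end of the previous year so that
  // the leap day falls at the end of the shifted year.
  year -= month <= 2;
  const int64_t era = (year >= 0 ? year : year - 399) / 400;
  const unsigned year_of_era = static_cast<unsigned>(year - era * 400);  // [0, 399]
  const unsigned day_of_year =
      (153 * (month > 2 ? month - 3 : month + 9) + 2) / 5 + day - 1;    // [0, 365]
  const unsigned day_of_era =
      year_of_era * 365 + year_of_era / 4 - year_of_era / 100 + day_of_year;
  return static_cast<int32_t>(era * 146097 + static_cast<int64_t>(day_of_era) - 719468);
}
```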

TIME is used for a logical time type without a date with millisecond, microsecond, or nanosecond precision. The type has two type parameters: UTC adjustment (true or false) and unit (MILLIS, MICROS, or NANOS).

TIME with unit MILLIS is used for millisecond precision. It must annotate an int32 that stores the number of milliseconds after midnight.

TIME with unit MICROS is used for microsecond precision. It must annotate an int64 that stores the number of microseconds after midnight.

TIME with unit NANOS is used for nanosecond precision. It must annotate an int64 that stores the number of nanoseconds after midnight.

The sort order used for TIME is signed.
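The three units differ only in the multiplier and in the width of the stored integer. A minimal sketch, with hypothetical helper names and no range validation of the inputs:

```cpp
#include <cassert>
#include <cstdint>

// TIME(MILLIS): milliseconds after midnight, stored as int32.
int32_t TimeToMillis(int hour, int minute, int second, int millis) {
  return ((hour * 60 + minute) * 60 + second) * 1000 + millis;
}

// TIME(MICROS): microseconds after midnight, stored as int64.
int64_t TimeToMicros(int hour, int minute, int second, int64_t micros) {
  return ((static_cast<int64_t>(hour) * 60 + minute) * 60 + second) * 1000000 + micros;
}

// TIME(NANOS): nanoseconds after midnight, stored as int64.
int64_t TimeToNanos(int hour, int minute, int second, int64_t nanos) {
  return ((static_cast<int64_t>(hour) * 60 + minute) * 60 + second) * 1000000000 + nanos;
}
```

The largest millisecond value in a day, 86399999, fits comfortably in an int32, whereas microsecond and nanosecond counts need the 64-bit type.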

TIME_MILLIS is the deprecated ConvertedType counterpart of a TIME logical type that is UTC normalized and has MILLIS precision. Like the logical type counterpart, it must annotate an int32.

TIME_MICROS is the deprecated ConvertedType counterpart of a TIME logical type that is UTC normalized and has MICROS precision. Like the logical type counterpart, it must annotate an int64.

Although there is no exactly corresponding ConvertedType for local time semantics, in order to support forward compatibility with libraries that annotated their local time with the legacy TIME_MICROS and TIME_MILLIS annotations, Parquet writer implementations must annotate local time with the legacy annotations too, as shown below.

Backward compatibility:

| ConvertedType | LogicalType |
|---|---|
| TIME_MILLIS | TimeType (isAdjustedToUTC = true, unit = MILLIS) |
| TIME_MICROS | TimeType (isAdjustedToUTC = true, unit = MICROS) |

Forward compatibility:

| LogicalType | isAdjustedToUTC | unit | ConvertedType |
|---|---|---|---|
| TimeType | true | MILLIS | TIME_MILLIS |
| TimeType | true | MICROS | TIME_MICROS |
| TimeType | true | NANOS | - |
| TimeType | false | MILLIS | TIME_MILLIS |
| TimeType | false | MICROS | TIME_MICROS |
| TimeType | false | NANOS | - |

In data annotated with the TIMESTAMP logical type, each value is a single int64 number that can be decoded into year, month, day, hour, minute, second and subsecond fields using calculations detailed below. Please note that a value defined this way does not necessarily correspond to a single instant on the time-line and such interpretations are allowed on purpose.

The TIMESTAMP type has two type parameters:

- isAdjustedToUTC must be either true or false.
- unit must be one of MILLIS, MICROS or NANOS. This list is subject to potential expansion in the future. Upon reading, unknown units must be handled as unsupported features (rather than as errors in the data files).

A TIMESTAMP with isAdjustedToUTC=true is defined as the number of milliseconds, microseconds or nanoseconds (depending on the unit parameter being MILLIS, MICROS or NANOS, respectively) elapsed since the Unix epoch, 1970-01-01 00:00:00 UTC. Each such value unambiguously identifies a single instant on the time-line.

For example, in a TIMESTAMP(isAdjustedToUTC=true, unit=MILLIS), the

number 172800000 corresponds to 1970-01-03 00:00:00 UTC, because it is equal to

2 * 24 * 60 * 60 * 1000, therefore it is exactly two days from the reference

point, the Unix epoch. In Java, this calculation can be achieved by calling

Instant.ofEpochMilli(172800000).

As a slightly more complicated example, if one wants to store 1970-01-03

00:00:00 (UTC+01:00) as a TIMESTAMP(isAdjustedToUTC=true, unit=MILLIS),

first the time zone offset has to be dealt with. By normalizing the timestamp to

UTC, we calculate what time in UTC corresponds to the same instant: 1970-01-02

23:00:00 UTC. This is 1 day and 23 hours after the epoch, therefore it can be

encoded as the number (24 + 23) * 60 * 60 * 1000 = 169200000.

Please note that time zone information gets lost in this process. Upon reading a value back, we can only reconstruct the instant, but not the original representation. In practice, such timestamps are typically displayed to users in their local time zones, therefore they may be displayed differently depending on the execution environment.
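The two worked examples above can be reproduced with a minimal Python sketch. The helper names encode_instant_millis and decode_instant_millis are illustrative assumptions, not part of any Parquet library; only the standard datetime module is used.

```python
from datetime import datetime, timedelta, timezone

# Reference point for isAdjustedToUTC=true: the Unix epoch as an instant.
EPOCH_UTC = datetime(1970, 1, 1, tzinfo=timezone.utc)


def encode_instant_millis(ts: datetime) -> int:
    """Encode a time-zone-aware datetime as TIMESTAMP(true, MILLIS)."""
    if ts.tzinfo is None:
        raise ValueError("an instant needs an explicit offset or time zone")
    # Subtracting aware datetimes compares them as instants (both in UTC).
    return (ts - EPOCH_UTC) // timedelta(milliseconds=1)


def decode_instant_millis(value: int) -> datetime:
    """Decode TIMESTAMP(true, MILLIS) into an instant expressed in UTC."""
    return EPOCH_UTC + timedelta(milliseconds=value)


plus_one = timezone(timedelta(hours=1))
stored = encode_instant_millis(datetime(1970, 1, 3, tzinfo=plus_one))
print(stored)                         # 169200000
print(decode_instant_millis(stored))  # 1970-01-02 23:00:00+00:00
```

The decoded value comes back in UTC: the original +01:00 offset is not stored anywhere and cannot be recovered from the number alone.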

A TIMESTAMP with isAdjustedToUTC=false represents year, month, day, hour,

minute, second and subsecond fields in a local timezone, regardless of what

specific time zone is considered local. This means that such timestamps should

always be displayed the same way, regardless of the local time zone in effect.

On the other hand, without additional information such as an offset or

time-zone, these values do not identify instants on the time-line unambiguously,

because the corresponding instants would depend on the local time zone.

Using a single number to represent a local timestamp is a lot less intuitive than for instants. One must use a local timestamp as the reference point and calculate the elapsed time between the actual timestamp and the reference point. The problem is that the result may depend on the local time zone, for example because there may have been a daylight saving time change between the two timestamps.

The solution to this problem is to use a simplification that makes the result

easy to calculate and independent of the timezone. Treating every day as

consisting of exactly 86400 seconds and ignoring DST changes altogether allows

us to unambiguously represent a local timestamp as a difference from a reference

local timestamp. We define the reference local timestamp to be 1970-01-01

00:00:00 (note the lack of UTC at the end, as this is not an instant). This way

the encoding of local timestamp values becomes very similar to the encoding of

instant values. For example, in a TIMESTAMP(isAdjustedToUTC=false, unit=MILLIS), the number 172800000 corresponds to 1970-01-03 00:00:00

(note the lack of UTC at the end), because it is exactly two days from the

reference point (172800000 = 2 * 24 * 60 * 60 * 1000).

Another way to get to the same definition is to treat the local timestamp value

as if it were in UTC and store it as an instant. For example, if we treat the

local timestamp 1970-01-03 00:00:00 as if it were the instant 1970-01-03

00:00:00 UTC, we can store it as 172800000. When reading 172800000 back, we can

reconstruct the instant 1970-01-03 00:00:00 UTC and convert it to a local

timestamp as if we were in the UTC time zone, resulting in 1970-01-03

00:00:00. In Java, this can be achieved by calling

LocalDateTime.ofEpochSecond(172800, 0, ZoneOffset.UTC).

Please note that while from a practical point of view this second definition is equivalent to the first one, from a theoretical point of view only the first definition can be considered correct; the second one just “incidentally” leads to the same results. Nevertheless, this second definition is worth mentioning as well, because it is relatively widespread and it can lead to confusion, especially due to its usage of UTC in the calculations. One can stumble upon code, comments and specifications ambiguously stating that a timestamp “is stored in UTC”. In some contexts, this means that it is normalized to UTC and acts as an instant. In other contexts, though, it means the exact opposite, namely that the timestamp is stored as if it were in UTC and in reality acts as a local timestamp.
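The first definition (a difference from a reference local timestamp, with every day counted as exactly 86400 seconds) can be sketched in Python with naive datetime values, which carry no time zone and therefore no DST rules. The helper names below are illustrative assumptions.

```python
from datetime import datetime, timedelta

# Reference point for isAdjustedToUTC=false: a local timestamp, not an instant.
LOCAL_EPOCH = datetime(1970, 1, 1)


def encode_local_millis(ts: datetime) -> int:
    """Encode a naive (zone-less) datetime as TIMESTAMP(false, MILLIS)."""
    if ts.tzinfo is not None:
        raise ValueError("a local timestamp must not carry an offset or time zone")
    # Naive arithmetic treats every day as exactly 86400 seconds.
    return (ts - LOCAL_EPOCH) // timedelta(milliseconds=1)


def decode_local_millis(value: int) -> datetime:
    """Decode TIMESTAMP(false, MILLIS) into year, month, day, ... fields."""
    return LOCAL_EPOCH + timedelta(milliseconds=value)


print(encode_local_millis(datetime(1970, 1, 3)))  # 172800000
print(decode_local_millis(172800000))             # 1970-01-03 00:00:00
```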

Every possible int64 number represents a valid timestamp, but depending on the

precision, the corresponding year may be outside of the practical everyday

limits and implementations may choose to only support a limited range.

On the other hand, not every combination of year, month, day, hour, minute,

second and subsecond values can be encoded into an int64. Most notably:

- Because of the limited range of the int64 type, timestamps using the NANOS unit
  can only represent values between 1677-09-21 00:12:43 and 2262-04-11 23:47:16.
  Values outside of this range cannot be represented with the NANOS
  unit. (Other precisions have similar limits but those are outside of the
  domain for practical everyday usage.)

The sort order used for TIMESTAMP is signed.
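The NANOS limits quoted above follow directly from the int64 range. The sketch below derives them with Python integers; because datetime only has microsecond resolution, the helper (an illustrative name) rounds down to the whole second.

```python
from datetime import datetime, timedelta

INT64_MIN, INT64_MAX = -2**63, 2**63 - 1
NANOS_PER_SECOND = 10**9
EPOCH = datetime(1970, 1, 1)


def nanos_to_whole_second(value: int) -> datetime:
    """Round a NANOS timestamp down to the whole second it falls in."""
    return EPOCH + timedelta(seconds=value // NANOS_PER_SECOND)


print(nanos_to_whole_second(INT64_MIN))  # 1677-09-21 00:12:43
print(nanos_to_whole_second(INT64_MAX))  # 2262-04-11 23:47:16
```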

TIMESTAMP_MILLIS is the deprecated ConvertedType counterpart of a TIMESTAMP

logical type that is UTC normalized and has MILLIS precision. Like the logical

type counterpart, it must annotate an int64.

TIMESTAMP_MICROS is the deprecated ConvertedType counterpart of a TIMESTAMP

logical type that is UTC normalized and has MICROS precision. Like the logical

type counterpart, it must annotate an int64.

Although there is no exact ConvertedType counterpart for local timestamp semantics,
a Parquet writer implementation must annotate local timestamps with the legacy
TIMESTAMP_MILLIS and TIMESTAMP_MICROS annotations too, as shown below. This maintains
forward compatibility with libraries that annotated their local timestamps with
these legacy annotations.

Backward compatibility:

| ConvertedType | LogicalType |

|---|---|

| TIMESTAMP_MILLIS | TimestampType (isAdjustedToUTC = true, unit = MILLIS) |

| TIMESTAMP_MICROS | TimestampType (isAdjustedToUTC = true, unit = MICROS) |

Forward compatibility:

| LogicalType | isAdjustedToUTC | unit | ConvertedType |
|---|---|---|---|
| TimestampType | true | MILLIS | TIMESTAMP_MILLIS |
| TimestampType | true | MICROS | TIMESTAMP_MICROS |
| TimestampType | true | NANOS | - |
| TimestampType | false | MILLIS | TIMESTAMP_MILLIS |
| TimestampType | false | MICROS | TIMESTAMP_MICROS |
| TimestampType | false | NANOS | - |
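The forward-compatibility tables for TIME and TIMESTAMP share one pattern: the legacy annotation depends only on the unit, and NANOS has no legacy counterpart. A minimal sketch of that lookup follows; the function name and the string-based representation of types are assumptions made for illustration.

```python
from typing import Optional


def legacy_converted_type(logical_type: str, is_adjusted_to_utc: bool,
                          unit: str) -> Optional[str]:
    """Return the legacy ConvertedType name a writer should also set, if any."""
    if logical_type not in ("TIME", "TIMESTAMP"):
        raise ValueError(f"unsupported logical type: {logical_type}")
    if unit not in ("MILLIS", "MICROS", "NANOS"):
        raise ValueError(f"unknown unit: {unit}")
    # is_adjusted_to_utc is intentionally ignored: local and UTC-normalized
    # values receive the same legacy annotation.
    if unit == "NANOS":
        return None
    return f"{logical_type}_{unit}"


print(legacy_converted_type("TIMESTAMP", False, "MICROS"))  # TIMESTAMP_MICROS
print(legacy_converted_type("TIME", True, "NANOS"))         # None
```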

INTERVAL is used for an interval of time. It must annotate a

fixed_len_byte_array of length 12. This array stores three little-endian

unsigned integers that represent durations at different granularities of time.

The first stores a number in months, the second stores a number in days, and

the third stores a number in milliseconds. This representation is independent

of any particular timezone or date.

Each component in this representation is independent of the others. For example, there is no requirement that a large number of days should be expressed as a mix of months and days because there is not a constant conversion from days to months.
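As an illustration of the 12-byte layout, the following sketch packs and unpacks the three components with Python's struct module. The function names are assumptions; the format string "<III" means three little-endian unsigned 32-bit integers.

```python
import struct
from typing import Tuple

# Three little-endian unsigned 32-bit integers: months, days, milliseconds.
_INTERVAL = struct.Struct("<III")


def encode_interval(months: int, days: int, millis: int) -> bytes:
    """Pack an INTERVAL into its 12-byte fixed_len_byte_array form."""
    return _INTERVAL.pack(months, days, millis)


def decode_interval(raw: bytes) -> Tuple[int, int, int]:
    """Unpack a 12-byte INTERVAL into (months, days, millis)."""
    if len(raw) != _INTERVAL.size:
        raise ValueError("INTERVAL must be exactly 12 bytes")
    return _INTERVAL.unpack(raw)


raw = encode_interval(1, 15, 3_600_000)  # 1 month, 15 days, 1 hour
print(raw.hex())                         # 010000000f00000080ee3600
print(decode_interval(raw))              # (1, 15, 3600000)
```

The components are kept separate: 45 days is stored as (0, 45, 0) and is never normalized into months.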

The sort order used for INTERVAL is undefined. When writing data, no min/max

statistics should be saved for this type and if such non-compliant statistics

are found during reading, they must be ignored.

Embedded types do not have type-specific orderings.

JSON is used for an embedded JSON document. It must annotate a BYTE_ARRAY

primitive type. The BYTE_ARRAY data is interpreted as a UTF-8 encoded character

string of valid JSON as defined by the JSON specification.

The sort order used for JSON is unsigned byte-wise comparison.

BSON is used for an embedded BSON document. It must annotate a BYTE_ARRAY

primitive type. The BYTE_ARRAY data is interpreted as an encoded BSON document as

defined by the BSON specification.

The sort order used for BSON is unsigned byte-wise comparison.

VARIANT is used for a Variant value. It must annotate a group. The group must

contain a field named metadata and a field named value. Both fields must have

type binary, which is also called BYTE_ARRAY in the Parquet thrift definition.

The VARIANT annotated group can be used to store either an unshredded Variant

value, or a shredded Variant value.

- The group must be annotated with the VARIANT logical type, with the version number
  included in the declaration.
- Both fields value and metadata must be of type binary (called BYTE_ARRAY
  in the Parquet thrift definition).
- The metadata field is required and must be a valid Variant metadata component,
  as defined by the Variant binary encoding specification.
- The value field must be a valid Variant value component,
  as defined by the Variant binary encoding specification.
- The value field is required for unshredded Variant values.
- The value field is optional and may be null only when parts of the Variant
  value are shredded according to the Variant shredding specification.

This is the expected representation of an unshredded Variant in Parquet:

optional group variant_unshredded (VARIANT(1)) {

required binary metadata;

required binary value;

}

This is an example representation of a shredded Variant in Parquet:

optional group variant_shredded (VARIANT(1)) {

required binary metadata;

optional binary value;

optional int64 typed_value;

}

GEOMETRY is used for geospatial features in the Well-Known Binary (WKB) format

with linear/planar edges interpolation. It must annotate a BYTE_ARRAY

primitive type. See Geospatial.md for more detail.

The type has only one type parameter:

- crs: An optional string value for CRS. If unset, the CRS defaults to
  "OGC:CRS84", which means that the geometries must be stored in longitude,
  latitude based on the WGS84 datum.

The sort order used for GEOMETRY is undefined. When writing data, no min/max

statistics should be saved for this type and if such non-compliant statistics

are found during reading, they must be ignored.

GEOGRAPHY is used for geospatial features in the WKB format with an explicit

(non-linear/non-planar) edges interpolation algorithm. It must annotate a

BYTE_ARRAY primitive type. See Geospatial.md for more detail.

The type has two type parameters:

- crs: An optional string value for CRS. It must be a geographic CRS, where
  longitudes are bound by [-180, 180] and latitudes are bound by [-90, 90].
  If unset, the CRS defaults to "OGC:CRS84".
- algorithm: An optional enum value that describes the edge interpolation
  algorithm. Supported values are: SPHERICAL, VINCENTY, THOMAS, ANDOYER,
  KARNEY. If unset, the algorithm defaults to SPHERICAL.

The sort order used for GEOGRAPHY is undefined. When writing data, no min/max

statistics should be saved for this type and if such non-compliant statistics

are found during reading, they must be ignored.

This section specifies how LIST and MAP can be used to encode nested types

by adding group levels around repeated fields that are not present in the data.

This does not affect repeated fields that are not annotated: A repeated field

that is neither contained by a LIST- or MAP-annotated group nor annotated

by LIST or MAP should be interpreted as a required list of required

elements where the element type is the type of the field.

WARNING: writers should not produce list types like these examples! They are

just for the purpose of reading existing data for backward-compatibility.

// List<Integer> (non-null list, non-null elements)

repeated int32 num;

// List<Tuple<Integer, String>> (non-null list, non-null elements)

repeated group my_list {

required int32 num;

optional binary str (STRING);

}

For all fields in the schema, implementations should use either LIST and

MAP annotations or unannotated repeated fields, but not both. When using

the annotations, no unannotated repeated types are allowed.

LIST is used to annotate types that should be interpreted as lists.

LIST must always annotate a 3-level structure:

<list-repetition> group <name> (LIST) {

repeated group list {

<element-repetition> <element-type> element;

}

}

- The outer-most level must be a group annotated with LIST that contains a
  single field named list. The repetition of this level must be either
  optional or required and determines whether the list is nullable.
- The middle level, named list, must be a repeated group with a single
  field named element.
- The element field encodes the list’s element type and repetition. Element
  repetition must be required or optional.

The following examples demonstrate two of the possible lists of string values.

// List<String> (list non-null, elements nullable)

required group my_list (LIST) {

repeated group list {

optional binary element (STRING);

}

}

// List<String> (list nullable, elements non-null)

optional group my_list (LIST) {

repeated group list {

required binary element (STRING);

}

}

Element types can be nested structures. For example, a list of lists:

// List<List<Integer>>
optional group array_of_arrays (LIST) {
  repeated group list {
    required group element (LIST) {
      repeated group list {
        required int32 element;
      }
    }
  }
}

New writer implementations should always produce the 3-level LIST structure shown above. However, historically data files have been produced that use different structures to represent list-like data, and readers may include compatibility measures to interpret them as intended.

It is required that the repeated group of elements is named list and that

its element field is named element. However, these names may not be used in

existing data and should not be enforced as errors when reading. For example,

the following field schema should produce a nullable list of non-null strings,

even though the repeated group is named element.

optional group my_list (LIST) {

repeated group element {

required binary str (STRING);

};

}

Some existing data does not include the inner element layer, resulting in a

LIST that annotates a 2-level structure. Unlike the 3-level structure, the

repetition of a 2-level structure can be optional, required, or repeated.

When it is repeated, the LIST-annotated 2-level structure can only serve as

an element within another LIST-annotated 2-level structure.

For backward-compatibility, the type of elements in LIST-annotated structures

should always be determined by the following rules:

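1. If the repeated field is not a group, then its type is the element type and
   elements are required.
2. If the repeated field is a group with multiple fields, then its type is the
   element type and elements are required.
3. If the repeated field is a group with one field with repeated repetition,
   then its type is the element type and elements are required.
4. If the repeated field is a group with one field and is named either array
   or uses the LIST-annotated group’s name with _tuple appended then the
   repeated type is the element type and elements are required.
5. Otherwise, the repeated group is a synthetic middle layer: its single field
   is the element, and that field's type and repetition are the element's
   type and repetition.

Examples that can be interpreted using these rules: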

WARNING: writers should not produce list types like these examples! They are

just for the purpose of reading existing data for backward-compatibility.

// Rule 1: List<Integer> (nullable list, non-null elements)

optional group my_list (LIST) {

repeated int32 element;

}

// Rule 2: List<Tuple<String, Integer>> (nullable list, non-null elements)

optional group my_list (LIST) {

repeated group element {

required binary str (STRING);

required int32 num;

};

}

// Rule 3: List<List<Integer>> (nullable outer list, non-null elements)

optional group my_list (LIST) {

repeated group array (LIST) {

repeated int32 array;

};

}

// Rule 4: List<OneTuple<String>> (nullable list, non-null elements)

optional group my_list (LIST) {

repeated group array {

required binary str (STRING);

};

}

// Rule 4: List<OneTuple<String>> (nullable list, non-null elements)

optional group my_list (LIST) {

repeated group my_list_tuple {

required binary str (STRING);

};

}

// Rule 5: List<String> (nullable list, nullable elements)

optional group my_list (LIST) {

repeated group element {

optional binary str (STRING);

};

}
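One possible reading of the backward-compatibility rules, checked against the Rule 1 to Rule 5 examples above, is sketched below. The Field class is a deliberately tiny, hypothetical schema model (it is not the API of any Parquet library), and resolve_list_element is an assumed helper name.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Field:
    name: str
    repetition: str                           # "required", "optional" or "repeated"
    children: Optional[List["Field"]] = None  # None means a primitive field

    @property
    def is_group(self) -> bool:
        return self.children is not None


def resolve_list_element(list_group: Field) -> Tuple[Field, bool]:
    """Return (element field, elements_nullable) for a LIST-annotated group."""
    repeated = list_group.children[0]
    if not repeated.is_group:                  # Rule 1: repeated primitive
        return repeated, False
    if len(repeated.children) > 1:             # Rule 2: group with several fields
        return repeated, False
    only = repeated.children[0]
    if only.repetition == "repeated":          # Rule 3: single repeated field
        return repeated, False
    if repeated.name in ("array", list_group.name + "_tuple"):  # Rule 4
        return repeated, False
    return only, only.repetition == "optional"  # Rule 5: standard middle layer


rule5 = Field("my_list", "optional",
              [Field("element", "repeated", [Field("str", "optional")])])
element, nullable = resolve_list_element(rule5)
print(element.name, nullable)  # str True
```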

MAP is used to annotate types that should be interpreted as a map from keys

to values. MAP must annotate a 3-level structure:

<map-repetition> group <name> (MAP) {

repeated group key_value {

required <key-type> key;

<value-repetition> <value-type> value;

}

}

- The outer-most level must be a group annotated with MAP that contains a
  single field named key_value. The repetition of this level must be either
  optional or required and determines whether the map is nullable.
- The middle level, named key_value, must be a repeated group with a key
  field for map keys and, optionally, a value field for map values. It must
  not contain any other values.
- The key field encodes the map’s key type. This field must have
  repetition required and must always be present. It must always be the first
  field of the repeated key_value group.
- The value field encodes the map’s value type and repetition. This field can
  be required, optional, or omitted. It must always be the second field of
  the repeated key_value group if present. If it is not present, the map can be
  represented as a map with all null values or as a set of keys.

The following example demonstrates the type for a non-null map from strings to nullable integers:

// Map<String, Integer>

required group my_map (MAP) {

repeated group key_value {

required binary key (STRING);

optional int32 value;

}

}

If there are multiple key-value pairs for the same key, then the final value

for that key must be the last value. Other values may be ignored or may be

added with replacement to the map container in the order that they are encoded.

The MAP annotation should not be used to encode multi-maps using duplicate

keys.
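The duplicate-key rule corresponds to adding pairs "with replacement" in encoding order. A minimal sketch, with build_map as an assumed helper name:

```python
from typing import Dict, Iterable, Optional, Tuple


def build_map(pairs: Iterable[Tuple[str, Optional[int]]]) -> Dict[str, Optional[int]]:
    """Materialize decoded key_value pairs; the last value for a key wins."""
    result: Dict[str, Optional[int]] = {}
    for key, value in pairs:
        result[key] = value  # a later pair replaces an earlier one
    return result


print(build_map([("a", 1), ("b", 2), ("a", 3)]))  # {'a': 3, 'b': 2}
```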

It is required that the repeated group of key-value pairs is named key_value

and that its fields are named key and value. However, these names may not

be used in existing data and should not be enforced as errors when reading.

(key and value can be identified by their position in case of misnaming.)

Some existing data incorrectly used MAP_KEY_VALUE in place of MAP. For

backward-compatibility, a group annotated with MAP_KEY_VALUE that is not

contained by a MAP-annotated group should be handled as a MAP-annotated

group.

Examples that can be interpreted using these rules:

// Map<String, Integer> (nullable map, non-null values)

optional group my_map (MAP) {

repeated group map {

required binary str (STRING);

required int32 num;

}

}

// Map<String, Integer> (nullable map, nullable values)

optional group my_map (MAP_KEY_VALUE) {

repeated group map {

required binary key (STRING);

optional int32 value;

}

}

Sometimes, when discovering the schema of existing data, values are always null

and there’s no type information.

The UNKNOWN type can be used to annotate a column that is always null.

(This is similar to the Null type in Avro and Arrow.)

The Variant type is designed to store and process semi-structured data efficiently, even with heterogeneous values.

Query engines encode each Variant value in a self-describing format, and store it as a group containing value and metadata binary fields in Parquet.

Since data is often partially homogeneous, it can be beneficial to extract certain fields into separate Parquet columns to further improve performance.

This process is called shredding.

Shredding enables the use of Parquet’s columnar representation for more compact data encoding, column statistics for data skipping, and partial projections.

For example, the query SELECT variant_get(event, '$.event_ts', 'timestamp') FROM tbl only needs to load field event_ts, and if that column is shredded, it can be read by columnar projection without reading or deserializing the rest of the event Variant.

Similarly, for the query SELECT * FROM tbl WHERE variant_get(event, '$.event_type', 'string') = 'signup', the event_type shredded column metadata can be used for skipping and to lazily load the rest of the Variant.

Variant metadata is stored in the top-level Variant group in a binary metadata column regardless of whether the Variant value is shredded.

All value columns within the Variant must use the same metadata.

All field names of a Variant, whether shredded or not, must be present in the metadata.

Variant values are stored in Parquet fields named value.

Each value field may have an associated shredded field named typed_value that stores the value when it matches a specific type.

When typed_value is present, readers must reconstruct shredded values according to this specification.

For example, a Variant field named measurement may be shredded as long values by adding typed_value with type int64:

required group measurement (VARIANT(1)) {

required binary metadata;

optional binary value;

optional int64 typed_value;

}

The Parquet columns used to store variant metadata and values must be accessed by name, not by position.

The series of measurements 34, null, "n/a", 100 would be stored as:

| Value | metadata | value | typed_value |

|---|---|---|---|

| 34 | 01 00 v1/empty | null | 34 |

| null | 01 00 v1/empty | 00 (null) | null |

| “n/a” | 01 00 v1/empty | 13 6E 2F 61 (n/a) | null |

| 100 | 01 00 v1/empty | null | 100 |

Both value and typed_value are optional fields used together to encode a single value.

Values in the two fields must be interpreted according to the following table:

| value | typed_value | Meaning |

|---|---|---|

| null | null | The value is missing; only valid for shredded object fields |

| non-null | null | The value is present and may be any type, including null |

| null | non-null | The value is present and is the shredded type |

| non-null | non-null | The value is present and is a partially shredded object |

An object is partially shredded when the value is an object and the typed_value is a shredded object.

Writers must not produce data where both value and typed_value are non-null, unless the Variant value is an object.

If a Variant is missing in a context where a value is required, readers must return a Variant null (00): basic type 0 (primitive) and physical type 0 (null).

For example, if a Variant is required (like measurement above) and both value and typed_value are null, the returned value must be 00 (Variant null).
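The interpretation table can be read as a four-way case analysis. The sketch below is only an illustration: interpret is an assumed name, value is treated as opaque Variant-encoded bytes, and the sample bytes are copied from the measurement table above.

```python
from typing import Any, Optional


def interpret(value: Optional[bytes], typed_value: Any) -> str:
    """Classify one (value, typed_value) pair following the table above."""
    if value is None and typed_value is None:
        return "missing"
    if typed_value is None:
        return "unshredded"  # any type, including Variant null
    if value is None:
        return "shredded"    # the value has the shredded type
    return "partially shredded object"


# The measurement series 34, null, "n/a", 100 with typed_value as int64.
rows = [(None, 34), (b"\x00", None), (b"\x13\x6e\x2f\x61", None), (None, 100)]
print([interpret(v, t) for v, t in rows])
# ['shredded', 'unshredded', 'unshredded', 'shredded']
```

For a required Variant, a reader would map the "missing" case to Variant null (00), as described above.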

Shredded values must use the following Parquet types:

| Variant Type | Parquet Physical Type | Parquet Logical Type |

|---|---|---|

| boolean | BOOLEAN | |

| int8 | INT32 | INT(8, signed=true) |

| int16 | INT32 | INT(16, signed=true) |

| int32 | INT32 | |

| int64 | INT64 | |

| float | FLOAT | |

| double | DOUBLE | |

| decimal4 | INT32 | DECIMAL(P, S) |

| decimal8 | INT64 | DECIMAL(P, S) |

| decimal16 | BYTE_ARRAY / FIXED_LEN_BYTE_ARRAY | DECIMAL(P, S) |

| date | INT32 | DATE |

| time | INT64 | TIME(false, MICROS) |

| timestamptz(6) | INT64 | TIMESTAMP(true, MICROS) |

| timestamptz(9) | INT64 | TIMESTAMP(true, NANOS) |

| timestampntz(6) | INT64 | TIMESTAMP(false, MICROS) |

| timestampntz(9) | INT64 | TIMESTAMP(false, NANOS) |

| binary | BINARY | |

| string | BINARY | STRING |

| uuid | FIXED_LEN_BYTE_ARRAY[len=16] | UUID |

| array | GROUP; see Arrays below | LIST |

| object | GROUP; see Objects below | |

Primitive values can be shredded using the equivalent Parquet primitive type from the table above for typed_value.

Unless the value is shredded as an object (see Objects), typed_value or value (but not both) must be non-null.

Arrays can be shredded by using a 3-level Parquet list for typed_value.

If the value is not an array, typed_value must be null.

If the value is an array, value must be null.

The list element must be a required group.

The element group can contain value and typed_value fields.

The element’s value field stores the element as Variant-encoded binary when the typed_value is not present or cannot represent it.

The typed_value field may be omitted when not shredding elements as a specific type.

The value field may be omitted when shredding elements as a specific type.

However, at least one of the two fields must be present.

For example, a tags Variant may be shredded as a list of strings using the following definition:

optional group tags (VARIANT(1)) {
  required binary metadata;
  optional binary value;
  optional group typed_value (LIST) {   # must be optional to allow a null list
    repeated group list {
      required group element {          # shredded element
        optional binary value;
        optional binary typed_value (STRING);
      }
    }
  }
}

All elements of an array must be present (not missing) because the array Variant encoding does not allow missing elements.

That is, either typed_value or value (but not both) must be non-null.

Null elements must be encoded in value as Variant null: basic type 0 (primitive) and physical type 0 (null).

The series of tags arrays ["comedy", "drama"], ["horror", null], ["comedy", "drama", "romance"], null would be stored as:

| Array | value | typed_value | typed_value...value | typed_value...typed_value |
|---|---|---|---|---|
| ["comedy", "drama"] | null | non-null | [null, null] | [comedy, drama] |
| ["horror", null] | null | non-null | [null, 00] | [horror, null] |
| ["comedy", "drama", "romance"] | null | non-null | [null, null, null] | [comedy, drama, romance] |
| null | 00 (null) | null | | |
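The rows of the tags table can be produced by a small sketch like the following. It assumes every non-null element is a string, uses the single byte 00 for Variant null as stated above, and models Parquet columns as plain Python dictionaries; shred_tags is an illustrative name.

```python
from typing import Any, Dict, List, Optional

VARIANT_NULL = b"\x00"  # Variant null: basic type 0 (primitive), physical type 0 (null)


def shred_tags(tags: Optional[List[Optional[str]]]) -> Dict[str, Any]:
    """Split a tags array into the value and typed_value columns."""
    if tags is None:
        # Not an array: typed_value must be null, value holds Variant null.
        return {"value": VARIANT_NULL, "typed_value": None}
    elements = []
    for tag in tags:
        if tag is None:
            # Null elements are encoded in value; they are never "missing".
            elements.append({"value": VARIANT_NULL, "typed_value": None})
        else:
            elements.append({"value": None, "typed_value": tag})
    return {"value": None, "typed_value": elements}


print(shred_tags(["horror", None]))
```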

Fields of an object can be shredded using a Parquet group for typed_value that contains shredded fields.

If the value is an object, typed_value must be non-null.

If the value is not an object, typed_value must be null.

Readers can assume that a value is not an object if typed_value is null and that typed_value field values are correct; that is, readers do not need to read the value column if typed_value fields satisfy the required fields.

Each shredded field in the typed_value group is represented as a required group that contains optional value and typed_value fields.

The value field stores the value as Variant-encoded binary when the typed_value cannot represent the field.

This layout enables readers to skip data based on the field statistics for value and typed_value.

The typed_value field may be omitted when not shredding fields as a specific type.

The value column of a partially shredded object must never contain fields represented by the Parquet columns in typed_value (shredded fields).

Readers may always assume that data is written correctly and that shredded fields in typed_value are not present in value.

As a result, reads when a field is defined in both value and a typed_value shredded field may be inconsistent.

For example, a Variant event field may shred event_type (string) and event_ts (timestamp) columns using the following definition:

optional group event (VARIANT(1)) {
  required binary metadata;
  optional binary value;                # a variant, expected to be an object
  optional group typed_value {          # shredded fields for the variant object
    required group event_type {         # shredded field for event_type
      optional binary value;
      optional binary typed_value (STRING);
    }
    required group event_ts {           # shredded field for event_ts
      optional binary value;
      optional int64 typed_value (TIMESTAMP(true, MICROS));
    }
  }
}

The group for each named field must use repetition level required.

A field’s value and typed_value are set to null (missing) to indicate that the field does not exist in the variant.

To encode a field that is present with a null value, the value must contain a Variant null: basic type 0 (primitive) and physical type 0 (null).

When both value and typed_value for a field are non-null, engines should fail.

If engines choose to read in such cases, then the typed_value column must be used.

Readers may always assume that data is written correctly and that only value or typed_value is defined.

As a result, reads when both value and typed_value are defined may be inconsistent with optimized reads that require only one of the columns.

The table below shows how the series of objects in the first column would be stored:

| Event object | value | typed_value | typed_value.event_type.value | typed_value.event_type.typed_value | typed_value.event_ts.value | typed_value.event_ts.typed_value | Notes |
|---|---|---|---|---|---|---|---|
| {"event_type": "noop", "event_ts": 1729794114937} | null | non-null | null | noop | null | 1729794114937 | Fully shredded object |
| {"event_type": "login", "event_ts": 1729794146402, "email": "user@example.com"} | {"email": "user@example.com"} | non-null | null | login | null | 1729794146402 | Partially shredded object |
| {"error_msg": "malformed: ..."} | {"error_msg": "malformed: ..."} | non-null | null | null | null | null | Object with all shredded fields missing |
| "malformed: not an object" | malformed: not an object | null | | | | | Not an object (stored as Variant string) |
| {"event_ts": 1729794240241, "click": "_button"} | {"click": "_button"} | non-null | null | null | null | 1729794240241 | Field event_type is missing |
| {"event_type": null, "event_ts": 1729794954163} | null | non-null | 00 (field exists, is null) | null | null | 1729794954163 | Field event_type is present and is null |
| {"event_type": "noop", "event_ts": "2024-10-24"} | null | non-null | null | noop | "2024-10-24" | null | Field event_ts is present but not a timestamp |
| { } | null | non-null | null | null | null | null | Object is present but empty |
| null | 00 (null) | null | | | | | Object/value is null |
| missing | null | null | | | | | Object/value is missing |
| INVALID: {"event_type": "login", "event_ts": 1729795057774} | {"event_type": "login"} | non-null | null | login | null | 1729795057774 | INVALID: Shredded field is present in value |
| INVALID: {"event_type": "login"} | {"event_type": "login"} | null | | | | | INVALID: Shredded field is present in value, while typed_value is null |
| INVALID: "a" | "a" | non-null | null | null | null | null | INVALID: typed_value is present and value is not an object |
| INVALID: {} | 02 00 (object with 0 fields) | null | | | | | INVALID: typed_value is null for object |

Invalid cases in the table above must not be produced by writers.

Readers must return an object when typed_value is non-null containing the shredded fields.
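The valid rows of the table above can be reproduced with a rough writer-side sketch. Several simplifications are assumptions made for illustration: Parquet columns are modeled as Python dictionaries, Python int stands in for the timestamp type, and any value that would normally be Variant-encoded binary is kept as a plain Python object. The names SHREDDED_FIELDS, shred_field and shred_event are hypothetical.

```python
from typing import Any, Dict

VARIANT_NULL = b"\x00"  # Variant null: basic type 0 (primitive), physical type 0 (null)

# Hypothetical shredding schema for this sketch: field name -> Python type.
SHREDDED_FIELDS = {"event_type": str, "event_ts": int}


def shred_field(obj: Dict[str, Any], name: str, py_type: type) -> Dict[str, Any]:
    """Return the value/typed_value pair for one shredded field."""
    if name not in obj:
        return {"value": None, "typed_value": None}          # field is missing
    field = obj[name]
    if field is None:
        return {"value": VARIANT_NULL, "typed_value": None}  # present, but null
    if isinstance(field, py_type) and not isinstance(field, bool):
        return {"value": None, "typed_value": field}         # matches shredded type
    return {"value": field, "typed_value": None}             # stand-in for Variant binary


def shred_event(event: Any) -> Dict[str, Any]:
    """Split an event into the top-level value and typed_value columns."""
    if event is None:
        return {"value": VARIANT_NULL, "typed_value": None}
    if not isinstance(event, dict):
        return {"value": event, "typed_value": None}         # not an object
    typed = {n: shred_field(event, n, t) for n, t in SHREDDED_FIELDS.items()}
    # Shredded fields must never be repeated in the residual value.
    rest = {k: v for k, v in event.items() if k not in SHREDDED_FIELDS}
    return {"value": rest or None, "typed_value": typed}


row = shred_event({"event_type": "login", "event_ts": 1729794146402,
                   "email": "user@example.com"})
print(row["value"])  # {'email': 'user@example.com'}
```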

The typed_value associated with any Variant value field can be any shredded type, as shown in the sections above.

For example, the event object above may also shred sub-fields as object (location) or array (tags).

optional group event (VARIANT(1)) {
  required binary metadata;
  optional binary value;
  optional group typed_value {
    required group event_type {
      optional binary value;
      optional binary typed_value (STRING);
    }
    required group event_ts {
      optional binary value;
      optional int64 typed_value (TIMESTAMP(true, MICROS));
    }
    required group location {
      optional binary value;
      optional group typed_value {
        required group latitude {
          optional binary value;
          optional double typed_value;
        }
        required group longitude {
          optional binary value;
          optional double typed_value;
        }
      }
    }
    required group tags {
      optional binary value;
      optional group typed_value (LIST) {
        repeated group list {
          required group element {
            optional binary value;
            optional binary typed_value (STRING);
          }
        }
      }
    }
  }
}

Statistics for typed_value columns can be used for file, row group, or page skipping when value is always null (missing).

When the corresponding value column is all nulls, all values must be the shredded typed_value field’s type.

Because the type is known, comparisons with values of that type are valid.

IS NULL/IS NOT NULL and IS NAN/IS NOT NAN filter results are also valid.

Comparisons with values of other types are not necessarily valid and data should not be skipped.

Casting behavior for Variant is delegated to processing engines. For example, the interpretation of a string as a timestamp may depend on the engine’s SQL session time zone.

It is possible to recover an unshredded Variant value using a recursive algorithm, where the initial call is to construct_variant with the top-level Variant group fields.

from typing import Any

# Metadata, Variant, VariantObject, VariantArray, VariantInteger and VariantString
# are placeholder types; this pseudocode only illustrates the control flow.

def construct_variant(metadata: Metadata, value: Variant, typed_value: Any) -> Variant:
    """Constructs a Variant from value and typed_value"""
    if typed_value is not None:
        if isinstance(typed_value, dict):
            # this is a shredded object
            object_fields = {
                name: construct_variant(metadata, field.value, field.typed_value)
                for (name, field) in typed_value.items()
            }
            if value is not None:
                # this is a partially shredded object
                assert isinstance(value, VariantObject), "partially shredded value must be an object"
                assert typed_value.keys().isdisjoint(value.keys()), "object keys must be disjoint"
                # union the shredded fields and non-shredded fields
                return VariantObject(metadata, object_fields).union(VariantObject(metadata, value))
            else:
                return VariantObject(metadata, object_fields)
        elif isinstance(typed_value, list):
            # this is a shredded array
            assert value is None, "shredded array must not conflict with variant value"
            elements = [
                construct_variant(metadata, elem.value, elem.typed_value)
                for elem in list(typed_value)
            ]
            return VariantArray(metadata, elements)
        else:
            # this is a shredded primitive
            assert value is None, "shredded primitive must not conflict with variant value"
            return primitive_to_variant(typed_value)
    elif value is not None:
        return Variant(metadata, value)
    else:
        # value is missing
        return None

def primitive_to_variant(typed_value: Any) -> Variant:
    if isinstance(typed_value, int):
        return VariantInteger(typed_value)
    elif isinstance(typed_value, str):
        return VariantString(typed_value)
    ...

Shredding is an optional feature of Variant, and readers must continue to be able to read a group containing only value and metadata fields.

Engines that do not write shredded values must be able to read shredded values according to this spec or must fail.

Different files may contain conflicting shredding schemas.

That is, files may contain different typed_value columns for the same Variant with incompatible types.

It may not be possible to infer or specify a single shredded schema that would allow all Parquet files for a table to be read without reconstructing the value as a Variant.

A Variant represents a type that contains one of:

A Variant is encoded with 2 binary values, the value and the metadata.

There are a fixed number of allowed primitive types, provided in the table below. These represent a commonly supported subset of the logical types allowed by the Parquet format.

The Variant Binary Encoding allows representation of semi-structured data (e.g. JSON) in a form that can be efficiently queried by path. The design is intended to allow efficient access to nested data even in the presence of very wide or deep structures.

Another motivation for the representation is that (aside from metadata) each nested Variant value is contiguous and self-contained. For example, in a Variant containing an Array of Variant values, the representation of an inner Variant value, when paired with the metadata of the full variant, is itself a valid Variant.

This document describes the Variant Binary Encoding scheme. Variant fields can also be shredded. Shredding refers to extracting some elements of the variant into separate columns for more efficient extraction/filter pushdown. The Variant Shredding specification describes the details of shredding Variant values as typed Parquet columns.

A Variant value in Parquet is represented by a group with 2 fields, named value and metadata.

The Variant group must be annotated with the VARIANT logical type.

Both value and metadata must be of type binary (called BYTE_ARRAY in the Parquet thrift definition).

The metadata field is required and must be a valid Variant metadata, as defined below.

The value field must be annotated as required for unshredded Variant values, or optional if parts of the value are shredded as typed Parquet columns.

The value field must be a valid Variant value, as defined below.

This is the expected unshredded representation in Parquet:

optional group variant_name (VARIANT(1)) {
  required binary metadata;
  required binary value;
}

This is an example representation of a shredded Variant in Parquet:

optional group shredded_variant_name (VARIANT(1)) {
  required binary metadata;
  optional binary value;
  optional int64 typed_value;
}

The VARIANT annotation places no additional restrictions on the repetition of Variant groups, but repetition may be restricted by containing types (such as MAP and LIST).

The Variant group name is the name of the Variant column.

The encoded metadata always starts with a header byte.

             7     6  5   4  3             0
            +-------+---+---+---------------+
header      |       |   |   |    version    |
            +-------+---+---+---------------+
                ^         ^
                |         +-- sorted_strings
                +-- offset_size_minus_one

The version is a 4-bit value that must always contain the value 1.

sorted_strings is a 1-bit value indicating whether dictionary strings are sorted and unique.

offset_size_minus_one is a 2-bit value providing the number of bytes per dictionary size and offset field.

The actual number of bytes, offset_size, is offset_size_minus_one + 1.
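The header fields can be pulled apart with a few bit operations. The sketch below assumes the bit positions given by the header diagram and the grammar in this section (version in bits 3..0, sorted_strings in bit 4, offset_size_minus_one in bits 7..6). MetadataHeader and parse_metadata_header are illustrative names, not part of any Parquet library.

```python
from typing import NamedTuple


class MetadataHeader(NamedTuple):
    version: int
    sorted_strings: bool
    offset_size: int  # bytes per dictionary_size and offset field (1..4)


def parse_metadata_header(header: int) -> MetadataHeader:
    """Split the metadata header byte into its three fields."""
    if not 0 <= header <= 0xFF:
        raise ValueError("header must be a single byte")
    version = header & 0x0F                      # bits 3..0
    sorted_strings = bool((header >> 4) & 0x1)   # bit 4
    offset_size = ((header >> 6) & 0x3) + 1      # bits 7..6, stored minus one
    if version != 1:
        raise ValueError(f"unsupported Variant metadata version: {version}")
    return MetadataHeader(version, sorted_strings, offset_size)
```

For example, the byte 0x51 (binary 01010001) decodes to version 1, sorted_strings set, and 2-byte offsets.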

The entire metadata is encoded as the following diagram shows:

             7                     0
            +-----------------------+
metadata    |        header         |
            +-----------------------+
            |                       |
            :    dictionary_size    :  <-- unsigned little-endian, `offset_size` bytes
            |                       |
            +-----------------------+
            |                       |
            :        offset         :  <-- unsigned little-endian, `offset_size` bytes
            |                       |
            +-----------------------+
                        :
            +-----------------------+
            |                       |
            :        offset         :  <-- unsigned little-endian, `offset_size` bytes
            |                       |      (`dictionary_size + 1` offsets)
            +-----------------------+
            |                       |
            :         bytes         :
            |                       |
            +-----------------------+

The metadata is encoded first with the header byte, then dictionary_size which is an unsigned little-endian value of offset_size bytes, and represents the number of string values in the dictionary.

Next is the offset list, which contains dictionary_size + 1 values.

Each offset is an unsigned little-endian value of offset_size bytes, and represents the starting byte offset of the i-th string in bytes.

The first offset value will always be 0, and the last offset value will always be the total length of bytes.

The last part of the metadata is bytes, which stores all the string values in the dictionary.

All string values must be UTF-8 encoded strings.
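The layout described above is enough to decode the dictionary. The following is a minimal reader sketch, not a reference implementation: parse_metadata is a hypothetical helper name, and the example buffer is built by hand for illustration. It does no bounds checking beyond what Python slicing provides.

```python
def parse_metadata(buf: bytes) -> list[str]:
    """Decode the dictionary strings from an encoded Variant metadata buffer."""
    header = buf[0]
    if header & 0x0F != 1:
        raise ValueError("unsupported Variant metadata version")
    offset_size = ((header >> 6) & 0x3) + 1

    def read_uint(pos: int) -> int:
        # Unsigned little-endian integer of `offset_size` bytes.
        return int.from_bytes(buf[pos:pos + offset_size], "little")

    dictionary_size = read_uint(1)
    offsets_start = 1 + offset_size
    # There are dictionary_size + 1 offsets; the last is the length of `bytes`.
    offsets = [read_uint(offsets_start + i * offset_size)
               for i in range(dictionary_size + 1)]
    bytes_start = offsets_start + (dictionary_size + 1) * offset_size
    return [
        buf[bytes_start + offsets[i]:bytes_start + offsets[i + 1]].decode("utf-8")
        for i in range(dictionary_size)
    ]


# Hand-built example: version 1, unsorted, 1-byte offsets, strings "a" and "bc".
example = bytes([0x01, 2, 0, 1, 3]) + b"abc"
print(parse_metadata(example))  # prints ['a', 'bc']
```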

The grammar for encoded metadata is as follows:

metadata: <header> <dictionary_size> <dictionary>

header: 1 byte (<version> | <sorted_strings> << 4 | (<offset_size_minus_one> << 6))

version: a 4-bit version ID. Currently, must always contain the value 1

sorted_strings: a 1-bit value indicating whether metadata strings are sorted

offset_size_minus_one: 2-bit value providing the number of bytes per dictionary size and offset field.

dictionary_size: `offset_size` bytes. unsigned little-endian value indicating the number of strings in the dictionary

dictionary: <offset>* <bytes>

offset: `offset_size` bytes. unsigned little-endian value indicating the starting position of the ith string in `bytes`. The list should contain `dictionary_size + 1` values, where the last value is the total length of `bytes`.

bytes: UTF-8 encoded dictionary string values
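Read in the other direction, the grammar also describes how a writer could assemble a metadata buffer. The sketch below is one possible encoder under a few assumptions: encode_metadata is a hypothetical name, it picks the smallest offset_size that fits, and it trusts the caller to pass strings that are already sorted and unique whenever sorted_strings is set.

```python
def encode_metadata(strings: list[str], sorted_strings: bool = False) -> bytes:
    """Build a Variant metadata buffer for the given dictionary strings."""
    encoded = [s.encode("utf-8") for s in strings]

    # Offsets are cumulative byte positions; the last one is len(bytes).
    offsets = [0]
    for item in encoded:
        offsets.append(offsets[-1] + len(item))

    # Both dictionary_size and every offset must fit in offset_size bytes.
    largest = max(offsets[-1], len(strings))
    offset_size = max(1, (largest.bit_length() + 7) // 8)
    if offset_size > 4:
        raise ValueError("dictionary too large for a 4-byte offset_size")

    # header: <version> | <sorted_strings> << 4 | <offset_size_minus_one> << 6
    header = 1 | (int(sorted_strings) << 4) | ((offset_size - 1) << 6)

    out = bytearray([header])
    out += len(strings).to_bytes(offset_size, "little")
    for offset in offsets:
        out += offset.to_bytes(offset_size, "little")
    for item in encoded:
        out += item
    return bytes(out)
```

Choosing the smallest offset_size keeps small dictionaries compact; a writer is free to use a larger size.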

Notes:

Offsets are relative to the start of the bytes array.

The length of the i-th string can be computed as offset[i+1] - offset[i].

offset_size_minus_one indicates the number of bytes per dictionary_size and offset entry, i.e. a value of 0 indicates 1-byte offsets, 1 indicates 2-byte offsets, 2 indicates 3-byte offsets, and 3 indicates 4-byte offsets.

If sorted_strings is set to 1, strings in the dictionary must be unique and sorted in lexicographic order. If the value is set to 0, readers may not make any assumptions about string order or uniqueness.

The entire encoded Variant value includes the value_metadata byte, followed by 0 or more bytes for the value_data.

           7                                  2 1          0
          +------------------------------------+------------+
value     |            value_header            | basic_type |  <-- value_metadata
          +------------------------------------+------------+
          |                                                 |
          :                   value_data                    :  <-- 0 or more bytes
          |                                                 |
          +-------------------------------------------------+

The basic_type is a 2-bit value that represents which basic type the Variant value is.

The basic types table shows what each value represents.

The value_header is a 6-bit value that contains more information about the type, and the format depends on the basic_type.
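Splitting the value_metadata byte is a matter of masking and shifting. This sketch assumes the layout in the diagram above, with basic_type in bits 1..0 and value_header in bits 7..2; split_value_metadata is an illustrative name only.

```python
def split_value_metadata(value_metadata: int) -> tuple[int, int]:
    """Return (basic_type, value_header) from the first byte of a Variant value."""
    if not 0 <= value_metadata <= 0xFF:
        raise ValueError("value_metadata must be a single byte")
    basic_type = value_metadata & 0x03           # bits 1..0
    value_header = (value_metadata >> 2) & 0x3F  # bits 7..2
    return basic_type, value_header
```

For example, the byte 0x15 (binary 00010101) yields basic_type 1 and a value_header of 5.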

Primitive type (basic_type=0)

When basic_type is 0, value_header is a 6-bit primitive_header.

The primitive types table shows what each value represents.

              5                     0
             +-----------------------+
value_header |   primitive_header    |
             +-----------------------+

Short string (basic_type=1)

When basic_type is 1, value_header is a 6-bit short_string_header.

              5                     0
             +-----------------------+
value_header |  short_string_header  |
             +-----------------------+

The short_string_header value is the length of the string.

Object (basic_type=2)

When basic_type is 2, value_header is made up of field_offset_size_minus_one, field_id_size_minus_one, and is_large.

                5   4  3     2 1     0
              +---+---+-------+-------+
value_header  |   |   |       |       |
              +---+---+-------+-------+
                    ^     ^       ^
                    |     |       +-- field_offset_size_minus_one
                    |     +-- field_id_size_minus_one
                    +-- is_large

field_offset_size_minus_one and field_id_size_minus_one are 2-bit values that represent the number of bytes used to encode the field offsets and field ids.

The actual number of bytes is computed as field_offset_size_minus_one + 1 and field_id_size_minus_one + 1.

is_large is a 1-bit value that indicates how many bytes are used to encode the number of elements.

If is_large is 0, 1 byte is used, and if is_large is 1, 4 bytes are used.
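The object value_header can be decoded as sketched below. The bit positions are an assumption read off the diagram above: field_offset_size_minus_one in bits 1..0, field_id_size_minus_one in bits 3..2, and is_large in bit 4. ObjectHeader and parse_object_header are hypothetical names used only for this illustration.

```python
from typing import NamedTuple


class ObjectHeader(NamedTuple):
    field_offset_size: int   # bytes per field offset (1..4)
    field_id_size: int       # bytes per field id (1..4)
    num_elements_size: int   # bytes used for num_elements (1 or 4)


def parse_object_header(value_header: int) -> ObjectHeader:
    """Decode the 6-bit value_header of an object (basic_type=2)."""
    field_offset_size = (value_header & 0x3) + 1      # bits 1..0, stored minus one
    field_id_size = ((value_header >> 2) & 0x3) + 1   # bits 3..2, stored minus one
    is_large = (value_header >> 4) & 0x1              # bit 4
    return ObjectHeader(field_offset_size, field_id_size, 4 if is_large else 1)
```

For example, a value_header of 0b010110 describes 3-byte field offsets, 2-byte field ids, and a 4-byte element count.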

Array (basic_type=3)

When basic_type is 3, value_header is made up of field_offset_size_minus_one and is_large.

               5         3  2  1     0
              +-----------+---+-------+
value_header  |           |   |       |
              +-----------+---+-------+
                            ^     ^
                            |     +-- field_offset_size_minus_one
                            +-- is_large

field_offset_size_minus_one is a 2-bit value that represents the number of bytes used to encode the field offset.

The actual number of bytes is computed as field_offset_size_minus_one + 1.

is_large is a 1-bit value that indicates how many bytes are used to encode the number of elements.

If is_large is 0, 1 byte is used, and if is_large is 1, 4 bytes are used.
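The value_header for basic_type 3 decodes the same way with one field fewer. The sketch assumes the layout in the diagram above, with field_offset_size_minus_one in bits 1..0 and is_large in bit 2; parse_array_header is an illustrative name.

```python
def parse_array_header(value_header: int) -> tuple[int, int]:
    """Return (field_offset_size, num_elements_size) for basic_type=3."""
    field_offset_size = (value_header & 0x3) + 1  # bits 1..0, stored minus one
    is_large = (value_header >> 2) & 0x1          # bit 2
    num_elements_size = 4 if is_large else 1      # bytes used for num_elements
    return field_offset_size, num_elements_size
```

For example, a value_header of 0b000101 means 2-byte offsets and a 4-byte element count.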

The value_data encoding format depends on the type specified by value_metadata.

For some types, value_data is empty (0 bytes).

Primitive type (basic_type=0)

When basic_type is 0, value_data depends on the primitive_header value.

The primitive types table shows the encoding format for each primitive type.

Short string (basic_type=1)

When basic_type is 1, value_data is the sequence of UTF-8 encoded bytes that represents the string.
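Putting the header and data rules for basic_type 1 together, a complete value can be decoded as sketched here. The example assumes that the length stored in short_string_header counts UTF-8 bytes rather than characters, which is what a 6-bit length over a byte sequence suggests; decode_short_string is a hypothetical helper name.

```python
def decode_short_string(value: bytes) -> str:
    """Decode a Variant value whose basic_type is 1."""
    value_metadata = value[0]
    if value_metadata & 0x03 != 1:
        raise ValueError("not a short string (basic_type != 1)")
    # short_string_header occupies the upper 6 bits, so the length is 0..63.
    length = value_metadata >> 2
    data = value[1:1 + length]
    if len(data) != length:
        raise ValueError("truncated short string")
    return data.decode("utf-8")


# value_metadata = (2 << 2) | 1 = 0x09, followed by the two bytes of "hi".
print(decode_short_string(bytes([0x09]) + b"hi"))  # prints hi
```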

Object (basic_type=2)

When basic_type is 2, value_data encodes an object.

The encoding format is shown in the following diagram:

7 0

+-----------------------+

object value_data | |

: num_elements : <-- unsigned little-endian, 1 or 4 bytes

| |

+-----------------------+

| |

: field_id : <-- unsigned little-endian, `field_id_size` bytes

| |

+-----------------------+

:

+-----------------------+

| |

: field_id : <-- unsigned little-endian, `field_id_size` bytes

| | (`num_elements` field_ids)

+-----------------------+

| |

: field_offset : <-- unsigned little-endian, `field_offset_size` bytes

| |

+-----------------------+

:

+-----------------------+